What Is Agentic AI? A Definition Built for Enterprise Buyers

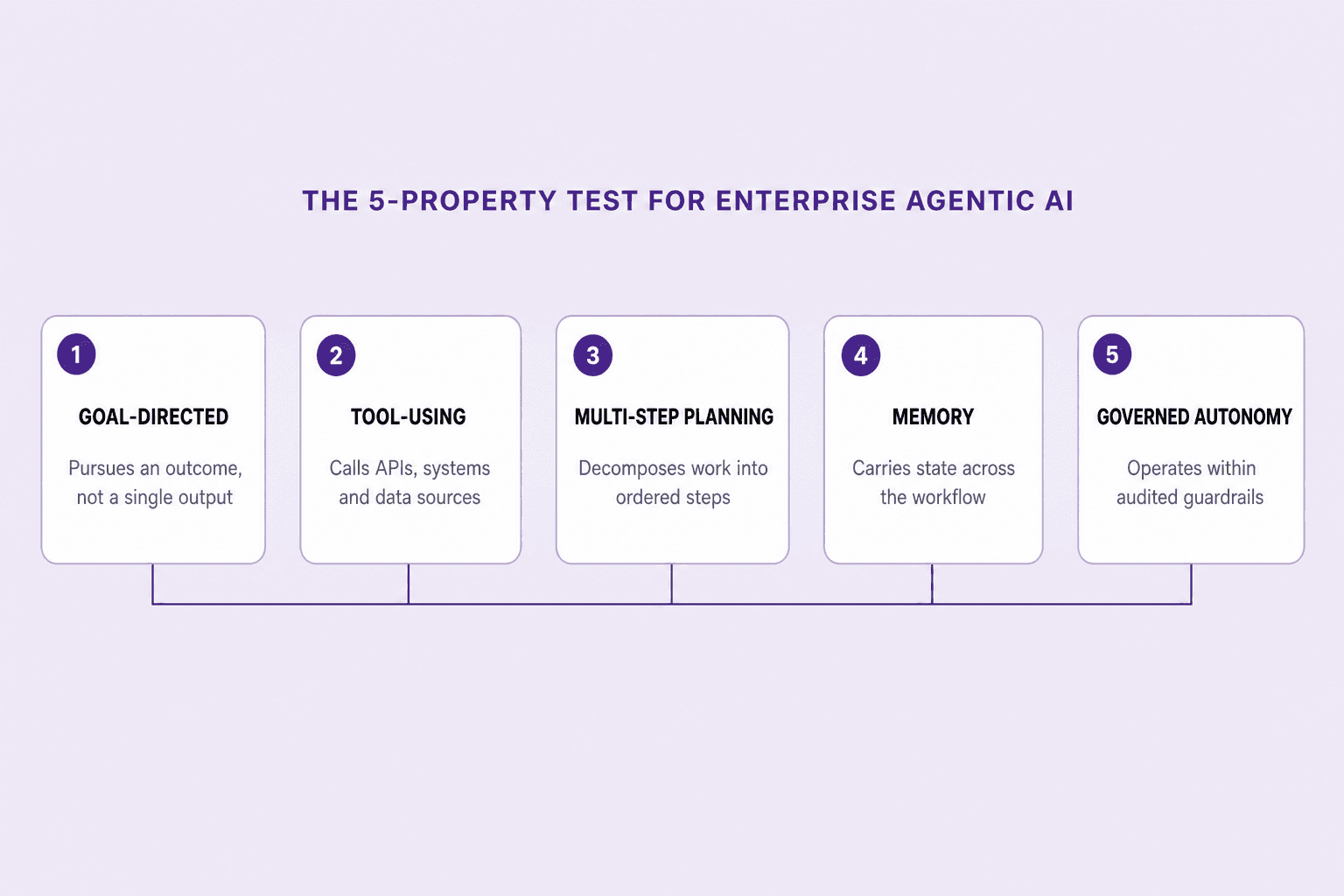

Agentic AI is the class of AI systems that pursue goals — planning, calling tools, holding memory, and acting under audited guardrails. Net0's enterprise definition is a five-property test that separates real agents from rebranded chatbots, copilots, and RPA.

Sofia Fominova

May 1, 2026

TL;DR

Agentic AI is the class of AI systems that pursue goals rather than answer prompts — they plan, call tools, retain memory across steps, and act under audited guardrails. For enterprise buyers, the working definition is stricter than the open-web one: an agent must be goal-directed, tool-using, multi-step, stateful, and governed, or it is a chatbot wearing a new label.

Key Takeaways

Agentic AI is goal-directed, not response-driven. A useful enterprise definition rests on five testable properties — goals, tool use, planning, memory, and governed autonomy. Anything missing one of the five is a chatbot, a copilot, or RPA.

Adoption is real but uneven. McKinsey's November 2025 State of AI survey of nearly 1,500 organisations found 23% of respondents are scaling at least one agentic AI system and 39% are experimenting; in any given business function, no more than 10% have reached scaled deployment (McKinsey, 2025).

Most projects will fail. Gartner forecasts that over 40% of agentic AI projects will be cancelled by the end of 2027, citing escalating costs, unclear value, and inadequate risk controls (Gartner, June 2025).

"Agent washing" is a measurable risk. Gartner has explicitly warned of vendors rebranding chatbots, RPA scripts, and assistants as agents without delivering autonomous capability — a rebrand that buyers absorb at full price.

Governance is now part of the definition. EU AI Act high-risk obligations under Annex III take effect on 2 August 2026, with penalties of up to €15 million or 3% of global annual turnover for non-compliance (European Commission, 2024). An agent that cannot log every action, accept human oversight, or be halted is not deployable in a regulated workflow.

Architecture, not capability, is the bottleneck. IBM's Institute for Business Value survey of 1,000 senior executives found that fewer than one-third of organisations have implemented the interoperability and scalability features needed to scale agentic AI (IBM IBV, 2025).

The buyer's question is not "what is an agent" — it is "what is a defensible agent." Enterprise and government buyers need a definition that ties capability to evidence: per-action audit logs, identity scoping, replannable plans, and a kill-switch. Net0's three-layer architecture is built to that test.

Introduction

Net0 is an AI infrastructure company that builds AI solutions for governments and global enterprises. Across enterprise AI engagements, government AI transformation programmes, and AI for sustainability deployments, the same question now arrives at every procurement: what is agentic AI, and what counts as one.

Agentic AI is the class of artificial-intelligence systems that pursue goals autonomously — selecting tools, planning multi-step work, holding state across the workflow, and acting under audited guardrails. The definition matters because it determines whether a system is a productivity feature or an operating partner, and whether it falls inside or outside the EU AI Act's high-risk obligations that begin enforcement on 2 August 2026.

Enterprise and government buyers cannot afford the loose, marketing-led definitions in circulation. McKinsey's 2025 survey shows 62% of organisations are at least experimenting with AI agents, but only 23% are scaling any agentic system, and Gartner expects more than four in ten of today's agentic projects to be cancelled by the end of 2027. The gap between "deployed" and "defensible" is the whole story. This article supplies a stricter working definition, distinguishes agentic AI from the categories it is most often confused with, sets out the architectural substrate it requires, and explains why those constraints are what make the category investable.

What Agentic AI Is — A Five-Property Test

The clearest way to define agentic AI is by what it must do, not by what it is built from. A system qualifies as agentic for an enterprise buyer if and only if it satisfies all five of the following properties.

Goal-directed. The system is given an outcome — close the books, screen the supplier, route the citizen request — not a single instruction. It chooses the next action based on whether it advances that outcome. A model that produces a piece of text in response to a prompt is responding; a model that decides which tool to call next in service of an objective is acting.

Tool-using. The system reaches outside its own context to read and write through real interfaces: APIs, ERP modules, retrieval indexes, ticketing systems, code execution sandboxes, and identity-scoped service accounts. Without tool use, an agent is a reasoning engine with no effect outside its own context; with it, the agent can change things in the operating environment.

Multi-step planning. The system decomposes the goal into a sequence of actions, executes them, observes results, and replans on failure. Planning at runtime — not a fixed script encoded by an engineer — is what distinguishes agentic AI from RPA and from prompt-chained workflows.

Memory. The system retains state across steps and, where appropriate, across sessions. Short-term memory holds the working plan and intermediate results; long-term memory holds context — the organisation's terminology, the customer's history, the regulatory framework in scope. Stateless one-turn calls cannot replan, cannot recover from failure, and cannot be audited usefully.

Governed autonomy. The system operates within an explicit envelope of permissions, scopes, and human-oversight checkpoints. Autonomy without governance is not agency; it is unmanaged software. A defensible agent has identity-scoped access, policy-as-code constraints, per-action logging, and a kill-switch that revokes its operating privileges in seconds.

A system that fails any one of these tests is not agentic. The point of the test is not academic. Gartner's analysts have warned of "agent washing" — vendors rebranding existing chatbots, automation tools, and assistants as agents without delivering genuine autonomous capability — and procurement teams paying agent-tier prices for chatbot-tier capability is now a measurable failure mode in the market.
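The all-or-nothing logic of the test can be written down as a short checklist. The sketch below is illustrative only: the `AgentEvidence` record, its field names, and the example vendor are assumptions made for this example, not part of any Net0 or Gartner tooling.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgentEvidence:
    """Evidence a buyer records per property (hypothetical field names)."""
    goal_directed: bool  # accepts an outcome, not a single instruction
    tool_using: bool     # reads and writes through real interfaces
    multi_step: bool     # plans and replans at runtime
    stateful: bool       # retains memory across steps
    governed: bool       # scoped identity, per-action logs, kill-switch


def missing_properties(evidence: AgentEvidence) -> list[str]:
    """Return the names of the properties the system fails."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def is_agentic(evidence: AgentEvidence) -> bool:
    """All five properties must hold; failing any one disqualifies."""
    return not missing_properties(evidence)


# Hypothetical vendor product: a copilot where the human remains the planner.
copilot = AgentEvidence(
    goal_directed=False, tool_using=True, multi_step=False,
    stateful=True, governed=True,
)
print(is_agentic(copilot))          # False
print(missing_properties(copilot))  # ['goal_directed', 'multi_step']
```

Returning the list of failed properties, rather than a bare yes or no, gives a procurement team something concrete to take back to the vendor.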

Agentic AI vs. Chatbots, Copilots, and RPA

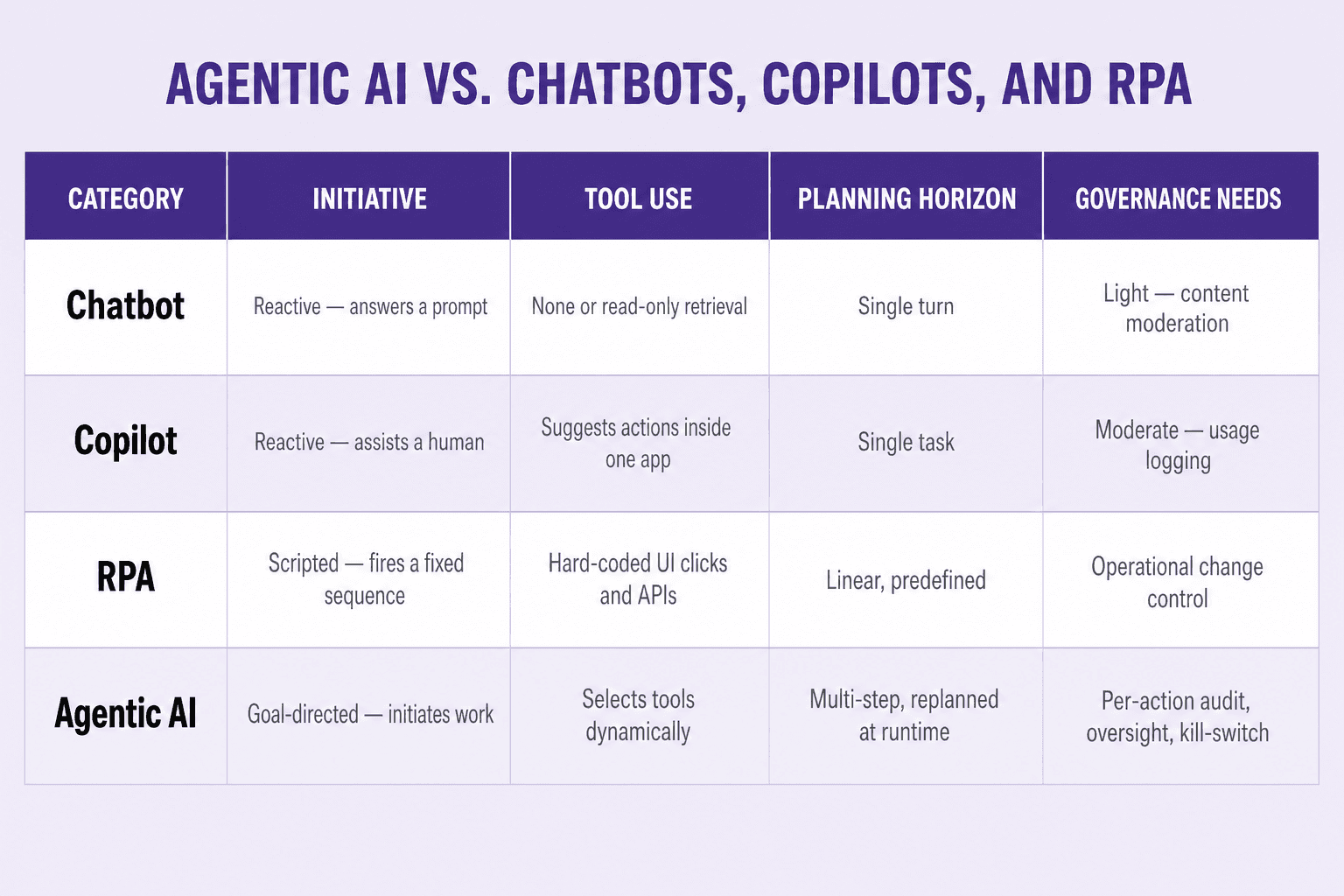

The five-property test makes the category boundaries explicit. The categories agentic AI is most often confused with — chatbots, copilots, and RPA — each fail one or more properties, in characteristic ways.

A chatbot answers a prompt and stops. It is reactive, single-turn, and rarely uses tools beyond read-only retrieval. Governance for a chatbot reduces to content moderation and PII redaction. The IBM Institute for Business Value notes that customer service is among the earliest adopters of true agents, but what those agents replace is internal service work, not the bots that have lived on enterprise websites for the last decade.

A copilot assists a human user inside a single application. It suggests actions, drafts content, completes code, and accelerates the human's workflow, but the human remains the planner. The copilot's tool surface is bounded by the host application, and its governance need is moderate — usage logging and entitlement checks. Forrester describes the shift from copilots to true agents as the move from "assistive" to "autonomous"; the transition is material because it changes who initiates work.

RPA automates a fixed sequence of UI clicks and API calls against a known process. It is scripted, not planned — an RPA bot does not decide whether to take the next step; it is told. Governance for RPA is operational change control: someone reviews the script before it runs in production. RPA is excellent at stable, high-volume workflows and brittle at anything that requires interpretation.

Agentic AI is none of those. It initiates work in service of an outcome, selects tools dynamically, plans and replans at runtime, and operates under per-action audit, oversight, and kill-switch controls. The HFS Research definition — adopted by IBM in its 2026 agentic services analysis — captures the same point: agentic systems "perceive context, plan goal-driven actions, and execute them at scale with trust, while humans set objectives, policy guardrails, and success criteria" (IBM HFS Horizons, 2026).
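The difference between scripted automation and runtime planning is easiest to see side by side. The following is a minimal sketch under stated assumptions: the tool names are hypothetical, the tools are stubs that simply succeed or fail, and `plan_next` is a hard-coded toy rule standing in for what would be a model-driven planner in a real system.

```python
from typing import Callable, Optional

Tool = Callable[[], bool]  # True on success; a stand-in for a real API call


def run_script(steps: list[Tool]) -> bool:
    """Scripted automation: fixed order, halts on the first failure."""
    return all(step() for step in steps)


def run_agent(tools: dict[str, Tool],
              plan_next: Callable[[list[tuple[str, bool]]], Optional[str]],
              max_steps: int = 5) -> list[tuple[str, bool]]:
    """Agent loop: pick an action, execute, observe, replan from history."""
    history: list[tuple[str, bool]] = []
    for _ in range(max_steps):
        name = plan_next(history)
        if name is None:  # planner judges the goal met or unreachable
            break
        history.append((name, tools[name]()))
    return history


# Toy tools: the primary lookup is down, the fallback registry works.
tools = {"primary_api": lambda: False, "fallback_registry": lambda: True}


def plan_next(history: list[tuple[str, bool]]) -> Optional[str]:
    """Toy planner: stop after a success, otherwise try an untried tool."""
    if any(ok for _, ok in history):
        return None
    tried = {name for name, _ in history}
    return next((name for name in tools if name not in tried), None)


print(run_script([tools["primary_api"], tools["fallback_registry"]]))  # False
print(run_agent(tools, plan_next))
# [('primary_api', False), ('fallback_registry', True)]
```

The script stops at the first failed step because it has no way to choose a different one. The loop observes the failure and selects another route, which is the behaviour the planning property asks for.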

The practical implication for buyers is that the comparison matters at procurement time, not just at strategy time. Many vendors offering "agents" today are selling sophisticated copilots, augmented chatbots, or LLM-prompted RPA — useful, but priced and governed as a different category than what is actually delivered. The five-property test cuts through that. The same distinction is the spine of Net0's agentic government glossary for public-sector buyers, where the question of what counts as agentic government is now binding national policy in the GCC.

The Architectural Substrate of Agentic AI

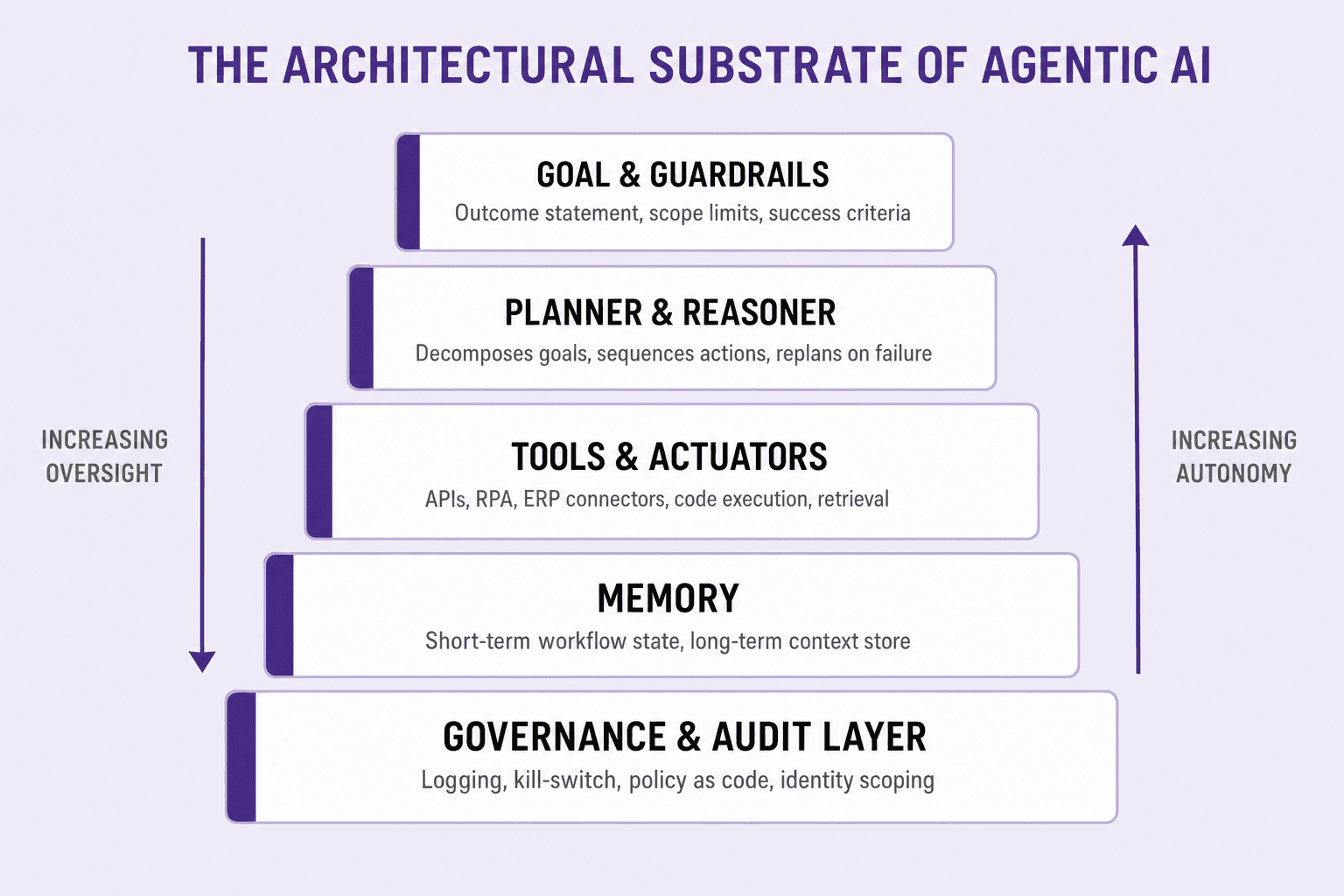

Agentic capability is not produced by a single foundation model. It is produced by a layered architecture in which the model is one component of a stack that handles goals, planning, tools, memory, and governance. Anything described as "agentic AI" without that stack is description without delivery.

Goal and guardrails. The agent is initialised with an outcome statement — what success looks like, what is in and out of scope, what failure modes are unacceptable. This layer translates the buyer's intent into machine-readable constraints, and is the single most under-built component in early agentic deployments.

Planner and reasoner. A planning module decomposes the goal into ordered actions, sequences them, monitors execution, and revises the plan when an action fails or returns unexpected output. This is where reasoning models, planning algorithms, and orchestration frameworks live. McKinsey's analysis of agentic AI describes these systems as "based on foundation models capable of acting in the real world, planning and executing multiple steps in a workflow" — the planner is the layer that does the acting and the executing, not the underlying language model.

Tools and actuators. The agent's reach is defined by its tools: APIs, ERP connectors, RPA bridges into legacy systems, retrieval indexes, code-execution environments, and document-intelligence pipelines. Tool use is identity-scoped — every call is signed under a service identity that the governance layer can audit and revoke.

Memory. Short-term workflow state holds the plan, intermediate results, and decision rationale across the steps of a single goal. Long-term context — organisation taxonomy, customer history, regulatory ontology — is held in retrieval-augmented memory. Forrester's research on enterprise agentic AI notes that the gap is not model capability but "accumulated context debt" — fragmented data estates, inconsistent taxonomies, and data infrastructure designed for human dashboards, not autonomous agents (Forrester, 2026).

Governance and audit layer. Beneath every other layer sits the governance substrate: per-action logging, identity-scoped permissions, policy-as-code rules, human-in-the-loop checkpoints for high-stakes decisions, anomaly detection, and the ability to revoke an agent's operating privileges within seconds. This is where regulatory compliance lives, and it is what most retrofitted agent platforms cannot produce on demand.
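One way to picture the governance layer is as a gateway that every tool call must pass through. The sketch below assumes a single in-memory gateway; `GovernedToolGateway`, the scope strings, and the `read_invoice` stub are hypothetical names for illustration and do not describe Net0's implementation.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when a call is out of scope or the identity is revoked."""


@dataclass
class GovernedToolGateway:
    """Routes each tool call through scope checks, logging, and a kill-switch."""
    identity: str
    scopes: set[str]
    audit_log: list[dict[str, Any]] = field(default_factory=list)
    revoked: bool = False

    def revoke(self) -> None:
        """Kill-switch: every later call is denied."""
        self.revoked = True

    def call(self, scope: str, tool: Callable[..., Any], *args: Any) -> Any:
        allowed = not self.revoked and scope in self.scopes
        # Log before acting, so denied attempts are recorded too.
        self.audit_log.append({
            "ts": time.time(), "identity": self.identity,
            "scope": scope, "tool": tool.__name__, "allowed": allowed,
        })
        if not allowed:
            raise PermissionDenied(f"{self.identity} denied for {scope}")
        return tool(*args)


def read_invoice(invoice_id: str) -> dict[str, str]:
    """Hypothetical ERP read that returns canned data."""
    return {"id": invoice_id, "status": "open"}


gateway = GovernedToolGateway(identity="svc-finance-agent", scopes={"erp:read"})
print(gateway.call("erp:read", read_invoice, "INV-1"))
gateway.revoke()
try:
    gateway.call("erp:read", read_invoice, "INV-2")
except PermissionDenied as exc:
    print(exc)
print([entry["allowed"] for entry in gateway.audit_log])  # [True, False]
```

Writing the log entry before the permission check takes effect means refused attempts leave a trace as well, which is usually what an auditor wants to see.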

The same architectural reasoning is the foundation of Net0's three-layer platform. As covered in Inside Net0's AI-First Architecture, Layer 2 (proprietary AI models) and Layer 3 (more than 60 modular components) are the agentic substrate, while Layer 1, the AI data platform, is the system of record that feeds reliable context into the reasoning loop. Owning the model layer end-to-end is what makes audited deployment possible, an argument treated in detail in the article on why Net0 builds its own AI models.

Why Enterprise Buyers Need a Stricter Definition

Most "what is agentic AI" content is written for a general audience. Enterprise and government buyers operate under a different set of constraints, and those constraints are what tighten the definition.

Failure cost is asymmetric. Gartner's June 2025 analysis attributes the projected cancellation rate of over 40% to escalating costs, unclear business value, and inadequate risk controls, three failure modes that a general definition does nothing to prevent. A stricter five-property test surfaces the gap before procurement, not after.

Regulation is now binding. EU AI Act Article 12 requires high-risk systems to record events automatically over the system's lifetime, and Articles 19 and 26 require providers and deployers to keep those logs for at least six months. Article 14 requires designed-in human oversight that lets a human override, disregard, or reverse an output, and interrupt the system through a stop button or similar procedure. Article 9 requires continuous risk management across the entire AI system lifecycle. None of those obligations is met by deploying a foundation-model API. They are properties of the agentic stack as a whole, and they take effect on 2 August 2026 for any system deemed high-risk under Annex III, which, as compliance teams are now discovering, includes far more agentic deployments than the eight named categories suggest.

Audit-defensibility is non-optional. For regulated industries — financial services, healthcare, public-sector workflows, ESG disclosure — every agent action eventually becomes an audit object. A definition of agentic AI that does not include per-action behavioural logging traceable to specific outputs, retained for at least six months, in a format a regulator can read, fails on contact with a real audit. That is the shape of the actual obligation, not a marketing concession.
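What such an audit object might look like can be sketched in a few lines. The example below is an assumption-heavy illustration: the record fields, the 183-day approximation of six months, and the hash chain used for tamper evidence are design choices made for this sketch, not requirements quoted from the EU AI Act.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone

MIN_RETENTION = timedelta(days=183)  # assumption: six months as 183 days


def _digest(body: dict) -> str:
    """Stable SHA-256 over a record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], action: str, output_id: str,
                  when: datetime) -> dict:
    """Append a per-action record linked to the previous record's hash."""
    body = {"ts": when.isoformat(), "action": action,
            "output_id": output_id,
            "prev_hash": log[-1]["hash"] if log else ""}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def chain_is_intact(log: list[dict]) -> bool:
    """Recompute each hash; an edited record or a gap fails the check."""
    prev_hash = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


def may_purge(record: dict, now: datetime) -> bool:
    """A record may be purged only after the minimum retention period."""
    return now - datetime.fromisoformat(record["ts"]) >= MIN_RETENTION


log: list[dict] = []
start = datetime(2026, 8, 2, tzinfo=timezone.utc)
append_record(log, "screen_supplier", "out-001", start)
append_record(log, "approve_payment", "out-002", start + timedelta(minutes=5))
print(chain_is_intact(log))                           # True
print(may_purge(log[0], start + timedelta(days=30)))  # False
log[0]["action"] = "edited"                           # simulate tampering
print(chain_is_intact(log))                           # False
```

Each record carries an `output_id` so an action can be traced to the output it produced, and the JSON form keeps the log readable without vendor tooling.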

Context debt is real. IBM's 2025 survey found that fewer than half of enterprises report scaling or optimising on any single critical element of an agentic operating model — infrastructure, governance, or workforce readiness. The bottleneck is not the model; it is the platform around the model. Buyers who treat agentic AI as a product purchase rather than an architectural commitment are statistically likely to land in Gartner's 40%.

Sovereignty is a procurement constraint. Government buyers and regulated enterprises in jurisdictions like the UAE, Saudi Arabia, and the EU need to run agents inside the regulatory perimeter — model weights, inference, logs, and memory all in-jurisdiction. That is incompatible with a vendor-hosted API that calls a US-resident foundation model. The definition of "deployable agentic AI" in those markets must include sovereign and hybrid deployment as defaults, not options.
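The in-jurisdiction requirement lends itself to a simple policy-as-code check. This is a sketch only: the `Component` record, the region codes, and the example deployment are hypothetical, and a real check would read hosting locations from infrastructure metadata instead of a hand-written list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str    # e.g. model weights, inference, logs, memory
    region: str  # where the component is hosted (illustrative codes)


def residency_violations(components: list[Component],
                         allowed: set[str]) -> list[str]:
    """Return the names of components hosted outside the allowed regions."""
    return [c.name for c in components if c.region not in allowed]


# Hypothetical deployment for a buyer whose perimeter is the "ae" region.
deployment = [
    Component("model_weights", "ae"),
    Component("inference", "us"),  # vendor-hosted API outside the perimeter
    Component("logs", "ae"),
    Component("memory", "ae"),
]
print(residency_violations(deployment, allowed={"ae"}))  # ['inference']
```

A single out-of-perimeter component is enough to fail the check, which mirrors the point that weights, inference, logs, and memory all have to sit inside the jurisdiction.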

The general-audience definition of agentic AI is "AI that takes action." The enterprise definition is "AI that takes auditable, scoped, replannable, governed action under a service identity, in a deployment that satisfies the customer's regulatory perimeter." The second definition is the one that determines whether the system is investable.

Where Net0 Fits

Net0's enterprise AI solutions, government AI transformation programmes, and AI for sustainability deployments are built on a three-layer AI-first architecture explicitly designed for the strict definition of agentic AI laid out above. The same stack supports finance close acceleration, supplier-risk monitoring, AI for finance operations, citizen-service routing, regulatory compliance reporting, and climate risk analytics — and is delivered end-to-end rather than as an off-the-shelf SaaS product.

Three architectural commitments make Net0's agentic deployments defensible by construction. First, the AI data platform ingests, normalises, and governs operational data from over 10,000 enterprise systems, providing the system of record that planning and memory layers depend on — without it, agents reason against fragmented context. Second, proprietary AI models are owned and operated by Net0, fine-tuned or fully custom-built on customer data during delivery, which makes sovereign and hybrid deployment a procurement default, not an exception. Third, modular components — more than 60 in the Layer 3 library, plus custom builds where the customer's use case has no existing match — sit behind a unified governance layer with per-action logging, identity-scoped permissions, and human-in-the-loop checkpoints from day one.

Across more than 400 entities on four continents, the same architectural and delivery principles apply. The output is agentic AI that passes the five-property test, satisfies the governance obligations of the EU AI Act and analogous regional frameworks, and slots into existing enterprise and government workflows under the customer's regulatory perimeter.

Book a demo to scope a custom-configured agentic AI deployment against your specific operating environment, regulatory jurisdiction, and audit requirements. The same stack that powers Net0's AI-first sustainability platform and government AI infrastructure is the one that runs agentic enterprise workflows in finance, procurement, supply chain, and risk.

FAQ

What is agentic AI in simple terms?

Agentic AI is the class of AI systems that pursue a goal rather than answer a single prompt. They plan a sequence of actions, call external tools and systems, retain memory across steps, and operate under explicit oversight. A practical test is whether the system can be given an outcome and trusted to choose the next action — if not, it is a chatbot, a copilot, or scripted automation.

How is agentic AI different from a chatbot or a copilot?

A chatbot reacts to a prompt and stops; it has no goals beyond the current message. A copilot assists a human inside one application — the human is still the planner. Agentic AI initiates work, selects tools dynamically, plans across multiple steps, and operates under per-action audit and oversight. The five-property test — goal-directed, tool-using, multi-step, stateful, governed — is the cleanest way to tell them apart.

Is agentic AI the same as RPA?

No. RPA executes a fixed script of UI clicks and API calls; it does not decide what to do next. Agentic AI plans the sequence at runtime, replans on failure, and chooses tools based on the current state of the workflow. RPA can be one of the tools an agent uses, but a script is not an agent.

Why are most agentic AI projects expected to fail?

Gartner forecasts that over 40% of agentic AI projects will be cancelled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. The most common root causes are deploying agents without a strategy, layering them onto legacy processes designed for humans, and underinvesting in the governance and architectural layers needed for production scale.

What does the EU AI Act require for agentic AI?

For agentic systems classified as high-risk under Annex III, the EU AI Act requires continuous risk management (Article 9), high-quality data governance (Article 10), Annex IV technical documentation (Article 11), automatic event logging (Article 12) with logs kept for at least six months (Articles 19 and 26), transparency for deployers (Article 13), designed-in human oversight with override and stop procedures (Article 14), and EU database registration. High-risk obligations take effect on 2 August 2026, with penalties of up to €15 million or 3% of global annual turnover for non-compliance.

What infrastructure does enterprise agentic AI need?

A defensible agentic deployment needs a layered stack: a goal and guardrail layer that translates intent into constraints; a planner and reasoner that sequences actions and replans on failure; tools and actuators with identity-scoped access to APIs and systems; memory that holds workflow state and long-term context; and a governance and audit layer beneath everything for logging, oversight, and kill-switch control. A foundation-model API on its own is not agentic AI.

What is "agent washing"?

Agent washing is the practice of rebranding existing chatbots, RPA scripts, and assistants as agentic AI without delivering genuine autonomous capability. Gartner has explicitly named it as a market risk, and it is one of the reasons buyers should apply a strict five-property test rather than rely on vendor labelling.

How does Net0 deliver agentic AI?

Net0 delivers agentic AI through a three-layer AI-first architecture — an AI data platform as the system of record, proprietary AI models that can be deployed in sovereign or hybrid configurations, and more than 60 modular components combined into a custom-configured or custom-built solution for each customer. Per-action audit logging, identity-scoped tool use, human-in-the-loop checkpoints, and policy-as-code controls are built in across all three layers, so deployments satisfy the strict five-property test and the EU AI Act's high-risk obligations from day one.