Agentic Government Glossary: 100+ AI Agent Terms Explained (2026)

An alphabetical reference of 120+ terms every public-sector AI leader should know in 2026 — AI agents, agentic workflows, sovereign AI, accreditation and oversight.

Sofia Fominova

Apr 22, 2026

TL;DR

An agentic government glossary defines the terms that appear across AI agent design, deployment, oversight and procurement for public-sector institutions. This 2026 edition from Net0 covers 120+ definitions used by government CIOs, policy leads, procurement officers and accreditation teams operating under the EU AI Act, the NIST AI Risk Management Framework, the Council of Europe Framework Convention on AI, and the Seoul Frontier AI Safety Commitments.

Key Takeaways

120+ terms covering AI agents, agentic workflows, governance, sovereignty and accreditation, written in plain government English.

Updated for 2026: the EU AI Act's high-risk obligations apply from 2 August 2026 (European Commission, 2024); the Council of Europe Framework Convention on AI opened for signature in September 2024; the Model Context Protocol (MCP), released by Anthropic in November 2024, is now a de facto standard for agent tool integration.

Each definition is self-contained for quick reference — designed to be copied into tender documents, accreditation packs and programme-delivery guidance.

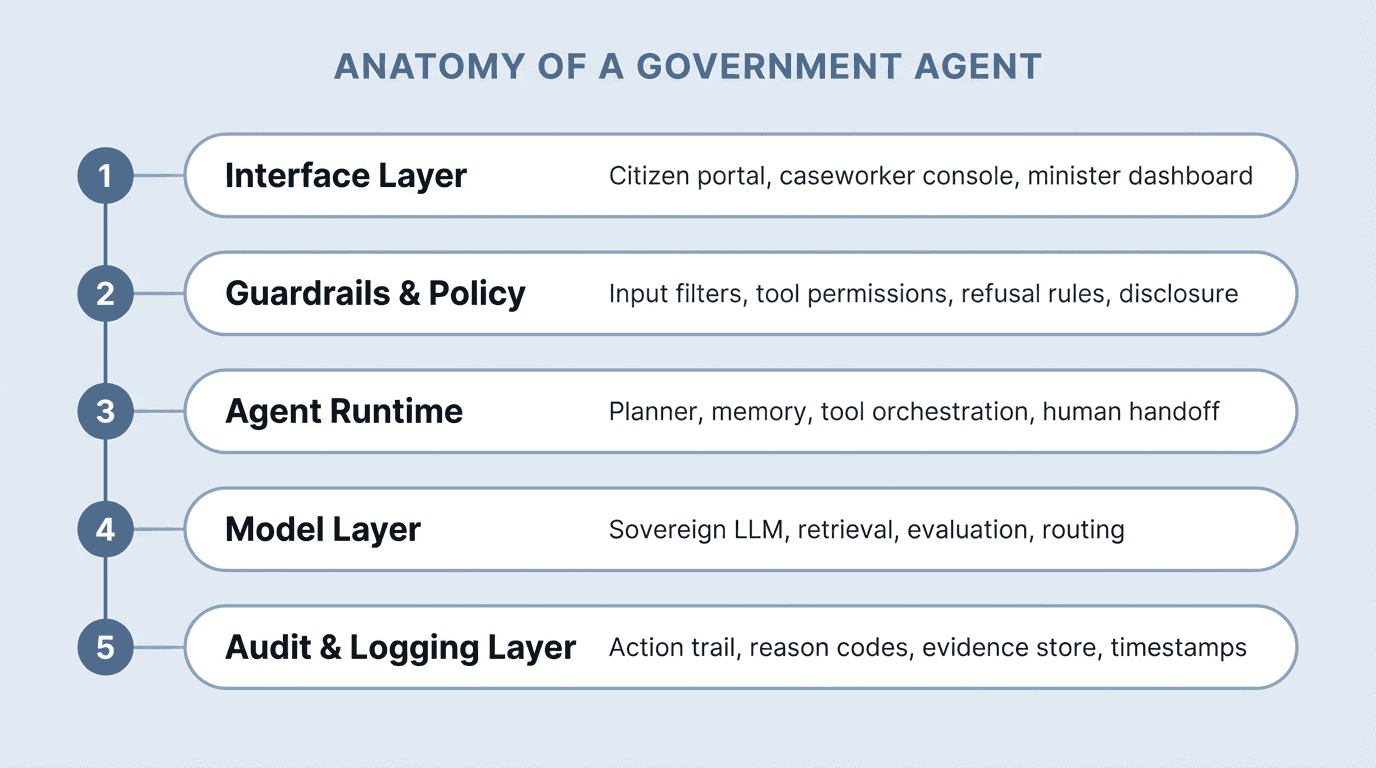

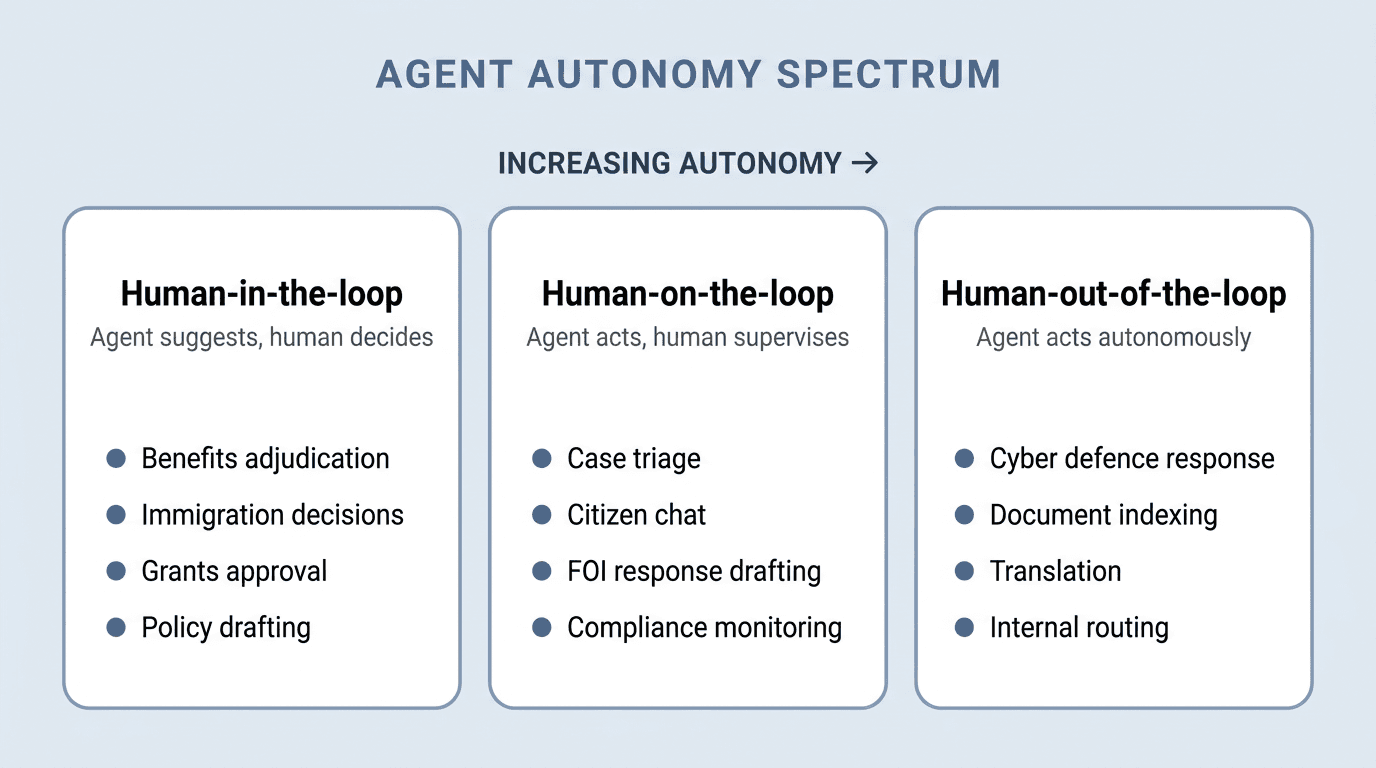

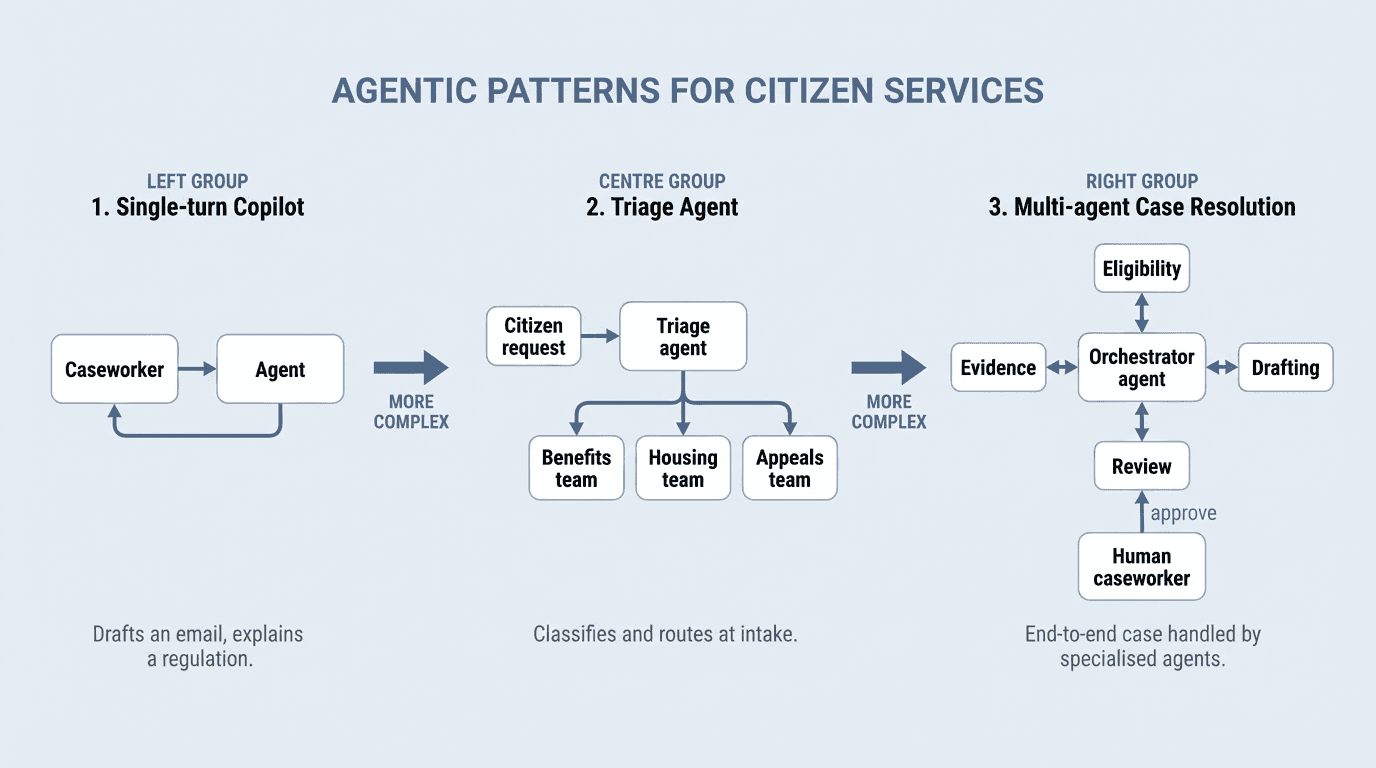

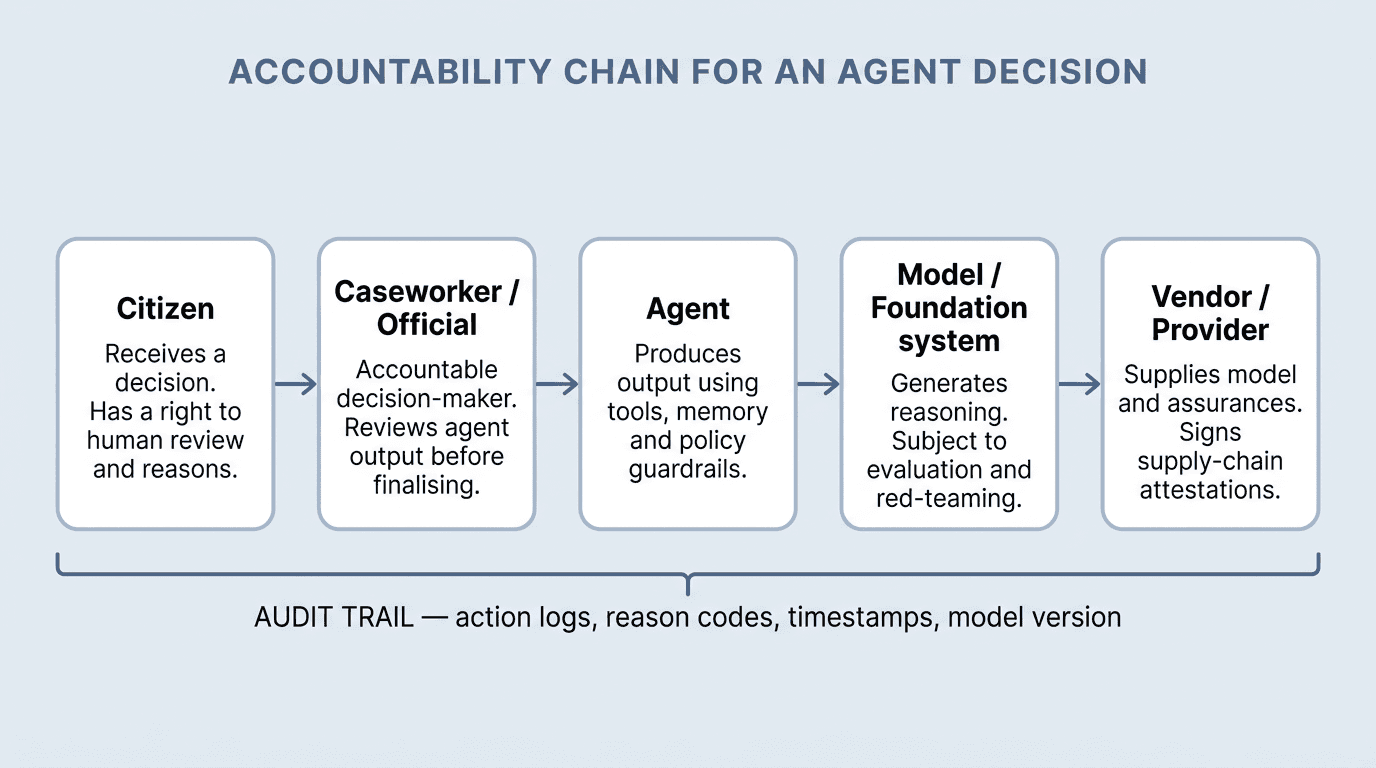

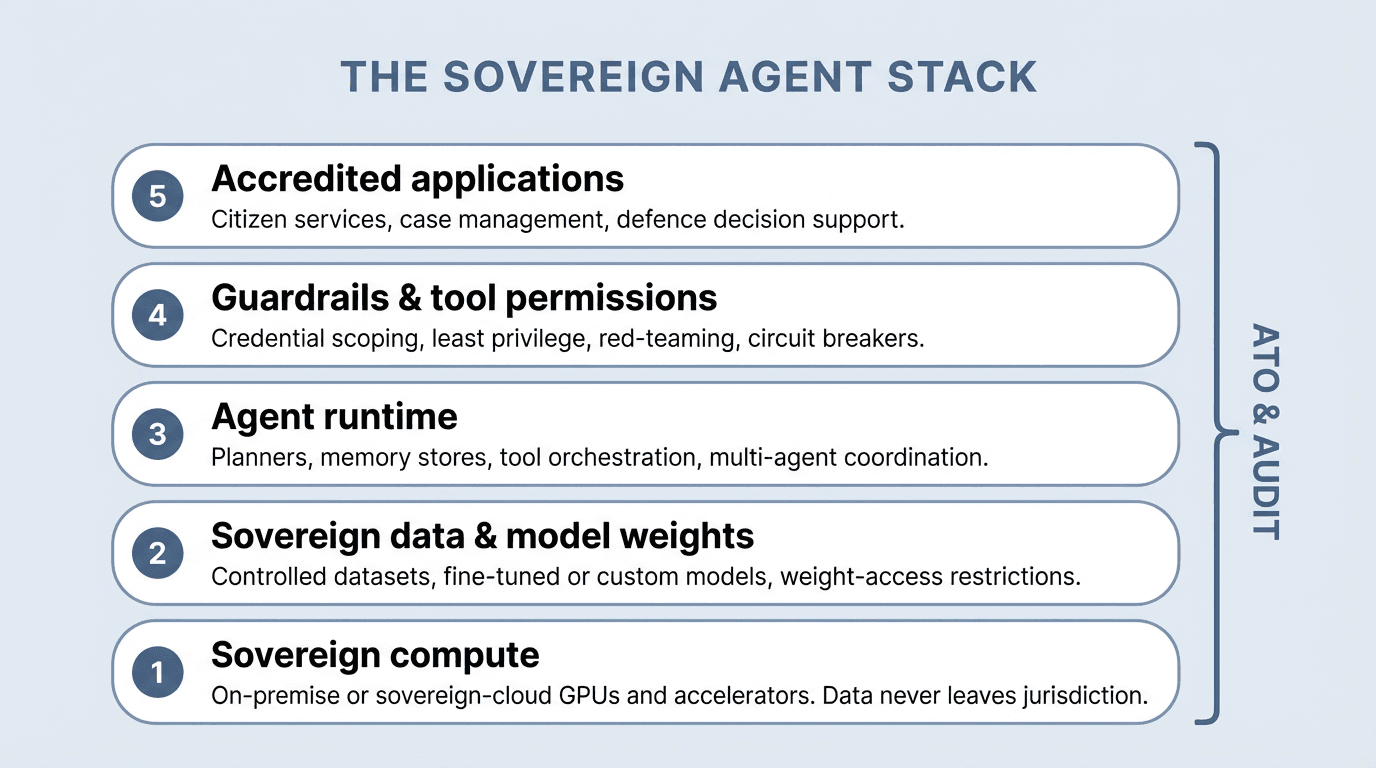

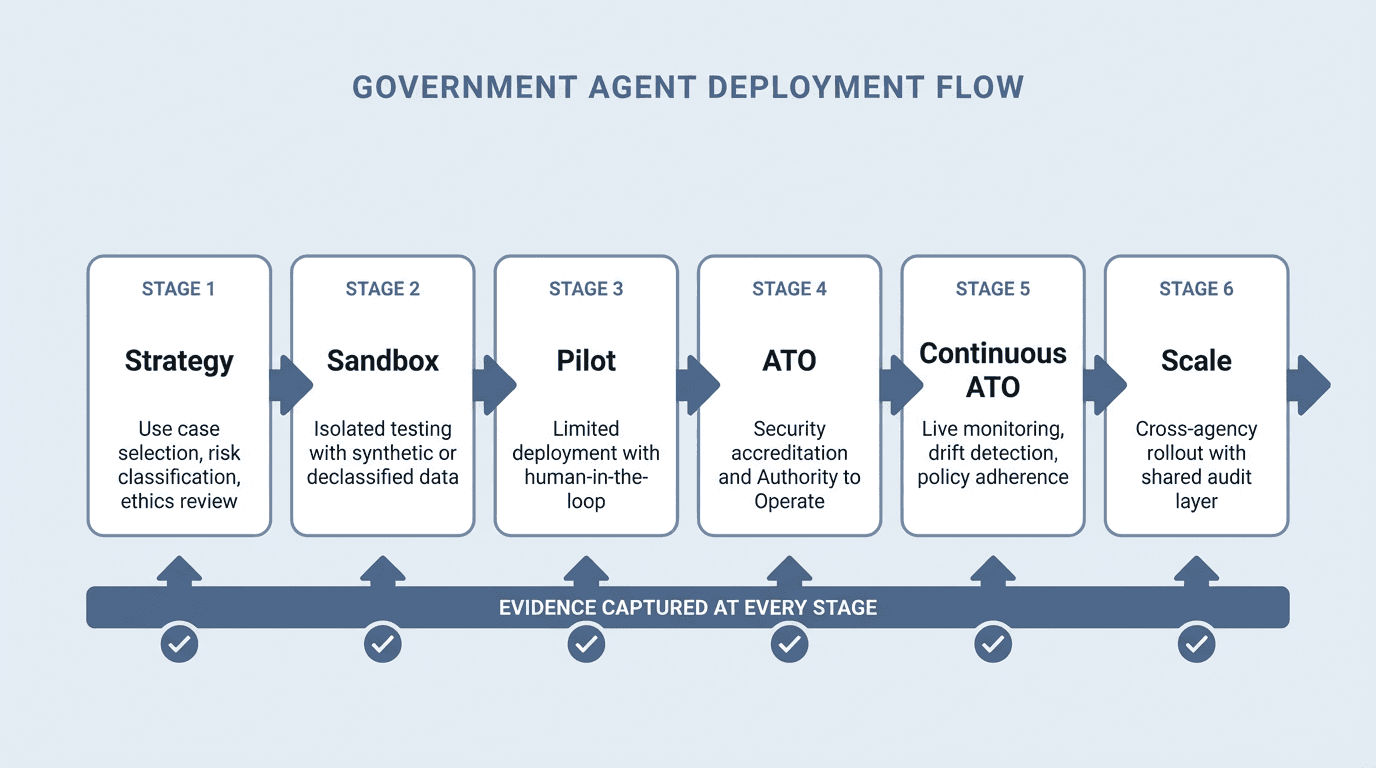

Six visual explainers cover agent anatomy, the autonomy spectrum, citizen-service patterns, the accountability chain, the sovereign agent stack and the government deployment flow.

Terms are cross-linked to longer explainers on the Net0 Government AI hub where a deeper reference exists.

Introduction

Net0 is an AI infrastructure company that builds AI solutions for governments and global enterprises, with a dedicated Government AI practice serving ministries, public-sector institutions and sovereign programmes across four continents. This agentic government glossary is one of the reference assets that programme teams rely on when standardising terminology across digital transformation, procurement, accreditation and policy.

Agents are no longer a research topic. Gartner predicts that 33% of enterprise software applications will incorporate agentic AI by 2028, up from less than 1% in 2024 (Gartner, 2024 Emerging Tech Impact Radar). Governments are following, and because they operate inside a thicker regulatory perimeter, they need more precise vocabulary: not just what an agent is, but how it is accredited, how its actions are logged, who is accountable when it is wrong, and under what authority it may act on a citizen's behalf. This glossary assembles that vocabulary in one alphabetical reference.

How to use this glossary

The entries below are arranged alphabetically. Where a term has a dedicated Net0 explainer — for example Net0's AI-first architecture or the case for sovereign proprietary AI models — the term is linked inline. A sister reference, the Net0 Carbon Management Glossary, performs the same function for sustainability vocabulary.

Six visual explainers sit at key points in the alphabet: agent anatomy, autonomy levels, citizen-service patterns, the accountability chain, the sovereign agent stack, and the government deployment flow.

A

Accountability chain

The accountability chain is the ordered sequence of actors responsible for an agent's decision, from the citizen who receives the outcome through the accountable official, the agent itself, the underlying model and the vendor that supplies it. Each link produces evidence — reason codes, action logs, model versions, supply-chain attestations — that together make the decision auditable. In 2026, the EU AI Act, NIST AI RMF and Council of Europe Framework Convention on AI all require this chain to be demonstrable before a high-risk system can operate.

Accreditation

Accreditation is the formal process by which a government authority confirms that an AI system meets its security, privacy and operational requirements before it can be used in production. In the United States this is expressed as an Authority to Operate (ATO); in the UK through G-Cloud and Digital Marketplace pathways; in Australia through IRAP; in Canada through the ITSP framework. Agentic systems typically require additional accreditation steps covering tool permissions, memory handling and escalation policy.

Action logging

Action logging is the recording of every action an agent takes (tool call, external request, write to a record, message sent) with timestamp, inputs, outputs and the model version that produced it. It is the minimum audit artefact required to reconstruct an agent's behaviour after the fact. Article 12 of the EU AI Act requires high-risk systems to support automatic event logging; the NIST AI RMF, a voluntary framework, recommends comparable documentation and monitoring practices.
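
As a rough illustration of the fields listed above, a single log entry might be modelled as follows. This is a minimal sketch, not a prescribed schema: the `ActionRecord` class, its field names and the example values are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ActionRecord:
    """One logged agent action. All field names are illustrative."""
    action_type: str      # e.g. "tool_call", "record_write", "message_sent"
    inputs: dict
    outputs: dict
    model_version: str
    # UTC timestamp captured when the record is created
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: ActionRecord) -> str:
    """Serialise a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = ActionRecord(
    action_type="tool_call",
    inputs={"tool": "lookup_case", "case_id": "C-001"},  # hypothetical tool
    outputs={"status": "found"},
    model_version="example-model-2026-01",               # hypothetical version tag
)
line = to_log_line(record)
```

Writing one JSON object per line keeps the log easy to append to and to replay when reconstructing a session.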

Agent

An agent is an AI system that plans and executes multi-step tasks by combining a language or reasoning model with tools, memory and a control loop. Unlike a single-turn chatbot, an agent can decide which tool to call, interpret the result, adjust its plan, and repeat until a goal is met. Government agents operate under explicit policy constraints — tool permissions, data boundaries, human-approval gates — rather than with open autonomy.
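
The control loop described above can be sketched in a few lines. This is an assumption-laden toy: `scripted_planner` stands in for the reasoning model, and the tool names, `ALLOWED_TOOLS` set and `run_agent` function are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical tools; a real deployment would call accredited services.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_address": lambda arg: f"address on file for {arg}",
    "draft_letter": lambda arg: f"draft letter about: {arg}",
}
# Explicit tool-permission policy, checked on every step.
ALLOWED_TOOLS = {"lookup_address", "draft_letter"}


def scripted_planner(goal: str, history: list) -> Optional[tuple]:
    """Stand-in for the model: returns (tool, argument), or None when done."""
    if not history:
        return ("lookup_address", goal)
    if len(history) == 1:
        # Use the previous tool result as input to the next step.
        return ("draft_letter", history[-1][1])
    return None


def run_agent(goal: str, max_steps: int = 5) -> list:
    """Plan, call a tool, record the result, and repeat until the goal is met."""
    history: list = []
    for _ in range(max_steps):
        step = scripted_planner(goal, history)
        if step is None:
            break
        tool, arg = step
        if tool not in ALLOWED_TOOLS:
            raise PermissionError(f"tool not permitted: {tool}")
        history.append((tool, TOOLS[tool](arg)))
    return history


trace = run_agent("citizen 42")
```

The `max_steps` cap and the permission check sit in the loop rather than in the planner, so they hold regardless of what the model proposes.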

Agentic workflow

An agentic workflow is a defined end-to-end process in which one or more agents collaborate with tools, data sources and human reviewers to complete a task. In government, typical workflows include benefits adjudication, FOI response drafting, licensing triage and inter-agency document routing. Designing the workflow — not the model — is where most programme risk and value sits.

AI Act (EU)

The EU AI Act (Regulation (EU) 2024/1689) is the European Union's horizontal AI law, in force since 1 August 2024. It classifies systems into four risk tiers — unacceptable, high, limited and minimal — and imposes obligations proportionate to tier. High-risk obligations, including risk management, data governance, human oversight, accuracy, robustness and cybersecurity, apply from 2 August 2026. Most government uses of AI that affect citizens fall inside the high-risk tier.

AI Commissioner / Chief AI Officer (government)

An AI Commissioner or government Chief AI Officer is the designated senior official accountable for AI deployment across a public-sector institution. The role emerged in response to the US OMB Memorandum M-24-10 (March 2024), which required federal agencies to appoint a CAIO, and has been echoed in the UK, Canada, Singapore and several EU member states. Responsibilities typically cover AI strategy, use-case inventories, risk assessment and cross-agency coordination.

AI export controls

AI export controls are government measures that restrict the transfer of AI models, model weights, compute hardware or related know-how to specified foreign destinations. The US Department of Commerce's January 2025 Framework for Artificial Intelligence Diffusion tiered global access to advanced AI chips and closed-weight frontier models. Similar controls exist in the EU Dual-Use Regulation and in UK and Japanese export regimes.

AI Safety Institute (UK AISI, US CAISI and partners)

AI Safety Institutes are government-backed bodies established to evaluate frontier AI models for systemic risk. The UK AI Safety Institute was announced at the Bletchley Summit in November 2023; the US AI Safety Institute, renamed the Center for AI Standards and Innovation (CAISI) in June 2025, was announced the same week. An International Network of AI Safety Institutes was formally launched at the 2024 Seoul Summit, adding institutes in Japan, Canada, Singapore, France and others.

AI Safety Summit

The AI Safety Summit is the recurring international meeting that coordinates government responses to frontier AI risk. Bletchley Park (November 2023) produced the Bletchley Declaration signed by 28 countries plus the EU; Seoul (May 2024) produced the Frontier AI Safety Commitments adopted by 16 leading developers; Paris (February 2025) reframed the series as the AI Action Summit and broadened the agenda to opportunity, public interest and sustainability.

AI sandbox

An AI sandbox is a controlled environment — typically using synthetic, declassified or de-identified data — in which a proposed agent can be tested before live deployment. The EU AI Act mandates that each member state operate at least one regulatory sandbox, coordinated at Union level, by 2 August 2026 (Article 57). The UK Information Commissioner's Office and Singapore IMDA run equivalent schemes.

Algorithmic Impact Assessment (AIA)

An Algorithmic Impact Assessment is a structured evaluation of the likely effects of an AI system on rights, service fairness and public trust, produced before deployment. Canada's Directive on Automated Decision-Making (in force since April 2020, updated April 2023) was the first national AIA regime; similar requirements appear in the EU AI Act fundamental rights impact assessment (Article 27) and in several US state laws.

Algorithmic transparency

Algorithmic transparency is the practice of publishing information about how an AI system works, what data it uses and how its decisions can be contested. The UK Algorithmic Transparency Recording Standard, made mandatory across central government in February 2024, is the most developed national transparency register. Several EU member states operate equivalent public registers.

ATO (Authority to Operate)

An Authority to Operate is a formal declaration by an agency's authorising official that a specific information system, including its AI components, may be used in production. Issued after a security-control assessment against frameworks such as NIST SP 800-53 (US federal) or ISM (Australia), an ATO is bounded by scope, environment and time. Agentic systems often require re-accreditation when tools, models or memory systems change.

Audit trail (agentic)

An agentic audit trail is the full record of an agent's state, decisions and actions sufficient to reconstruct a session. It typically includes the system prompt, tools called, inputs and outputs of each tool call, human-approval events and final output. NIST AI RMF and EU AI Act obligations require this trail to be tamper-evident and retained for the lifetime of the system plus a defined post-decommissioning period.
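
One common way to make a trail tamper-evident is a hash chain, in which each entry commits to the one before it. The sketch below assumes that technique; the source does not prescribe it, and the function names and event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_event(trail: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    trail.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(trail: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


trail: list = []
append_event(trail, {"type": "tool_call", "tool": "lookup_case"})
append_event(trail, {"type": "human_approval", "approved": True})
intact = verify(trail)

trail[0]["event"]["tool"] = "delete_case"  # simulate tampering with a stored event
tampered_detected = not verify(trail)
```

A chain like this only shows tampering if the latest hash is also held somewhere the writer cannot alter, for example with the oversight body.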

Automation bias

Automation bias is the human tendency to over-trust an automated recommendation, even when it conflicts with available evidence. In agentic government systems, automation bias is the primary failure mode behind wrongful benefit refusals, misrouted cases and false fraud alerts. Effective countermeasures include mandatory reasons disclosure, friction at high-impact decisions and routine back-testing of human override rates.

Autonomy (levels of)

Levels of autonomy describe how much decision latitude an agent has relative to a human. Three reference levels are in common use: human-in-the-loop (agent suggests, human decides), human-on-the-loop (agent acts, human supervises), and human-out-of-the-loop (agent acts autonomously). In government, the appropriate level is a policy choice driven by the reversibility, impact and legal weight of the action.

B

Benefits adjudication agent

A benefits adjudication agent is an AI system that assists or automates decisions on social-security, unemployment or welfare claims. In 2026, such systems are classified as high-risk under the EU AI Act and typically require human-in-the-loop oversight under national administrative-law principles. Failures in the Dutch SyRI case (2020) and the Australian Robodebt scheme (2016-2019) remain the canonical warnings against fully automated adjudication.

Bias audit

A bias audit is a structured examination of an AI system's performance across demographic or protected groups. NIST SP 1270 (March 2022) and ISO/IEC TR 24027 (2021) provide the main methodological frameworks. New York City Local Law 144 (in force July 2023) made independent bias audits mandatory for automated employment-decision tools, and the EU AI Act requires equivalent testing for high-risk systems.

Bletchley Declaration

The Bletchley Declaration is the outcome statement of the November 2023 AI Safety Summit, signed by 28 countries and the European Union. It recognised frontier AI as a shared safety concern and committed signatories to collaborate on evaluation, transparency and research. It is the founding political text of the AI Safety Summit series and of the International Network of AI Safety Institutes.

Border and immigration AI

Border and immigration AI covers agent-supported triage, document verification, traveller risk scoring and case-management automation at ports of entry and in consular processing. The EU AI Act lists several such uses as high-risk (Annex III, point 7, on migration, asylum and border control management) and prohibits certain forms of predictive policing and biometric categorisation. Implementation in 2026 requires fundamental-rights impact assessments and human oversight gates for adverse decisions.

C

Case-management agent

A case-management agent coordinates the end-to-end handling of a case — social services, housing, veterans' affairs, consumer complaints — by calling specialist agents and tools for eligibility, evidence and drafting while keeping a caseworker accountable for final decisions. The pattern differs from a chatbot because it maintains state across days or weeks and acts on behalf of the citizen within a bounded mandate.

cATO (Continuous Authority to Operate)

A Continuous ATO is an accreditation posture in which ongoing monitoring, automated control testing and evidence continuously reauthorise a system, replacing the traditional three-year re-authorisation cycle. The US DoD released its formal cATO strategy in February 2022 and updated criteria across 2023-2024. For agentic systems, a cATO must add monitoring of model updates, tool permissions and drift in policy-adherence metrics.

Chief AI Officer (government)

See AI Commissioner.

Circuit breaker

A circuit breaker is an automated stop mechanism that halts an agent — or an entire class of agents — when predefined conditions are met, such as a spike in hallucination rate, excessive tool-call failures or anomalous action patterns. Circuit breakers are a required control in most cATO regimes and a standard element of agentic guardrails under NIST AI RMF MEASURE 2.7.
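
A minimal sketch of such a stop mechanism, assuming a sliding-window failure rate as the trip condition. The `CircuitBreaker` class and its thresholds are illustrative, not drawn from any accreditation regime.

```python
from collections import deque


class CircuitBreaker:
    """Trips when the failure rate over a full sliding window exceeds a threshold."""

    def __init__(self, window: int = 20, max_failure_rate: float = 0.25):
        self.results = deque(maxlen=window)  # most recent outcomes only
        self.max_failure_rate = max_failure_rate
        self.tripped = False

    def record(self, success: bool) -> None:
        """Record one tool-call outcome and trip if the window looks unhealthy."""
        self.results.append(success)
        window_full = len(self.results) == self.results.maxlen
        failure_rate = self.results.count(False) / len(self.results)
        if window_full and failure_rate > self.max_failure_rate:
            self.tripped = True  # stays tripped until explicitly reset

    def allow(self) -> bool:
        """The runtime checks this before every agent action."""
        return not self.tripped

    def reset(self) -> None:
        """Intended to be called by a human operator after review."""
        self.results.clear()
        self.tripped = False


breaker = CircuitBreaker(window=4, max_failure_rate=0.5)
for ok in [True, False, False, False]:
    breaker.record(ok)
halted = not breaker.allow()
```

Requiring a manual `reset` rather than an automatic recovery is a design choice here: it forces a human review before the agent resumes.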

Citizen-services agent

A citizen-services agent is an AI system deployed on public-facing channels to answer questions, guide applications or draft responses on behalf of a government service. Notable live examples include the US IRS generative-AI assistant (announced 2024), Singapore's LifeSG chatbot services, and the UK GOV.UK Chat pilot (public beta in 2024-2025). Design emphasis is on accuracy, contestability and equivalent outcomes to a human channel.

Classification levels

Classification levels are the legal categories by which governments mark information sensitivity — typically OFFICIAL, SECRET and TOP SECRET, with national variants (CONFIDENTIAL, RESTRICTED, NOFORN and others). Agent deployment decisions flow from classification: higher levels require air-gapped infrastructure, cleared personnel, and usually on-premise or sovereign-cloud models with controlled weights.

Classified AI

Classified AI refers to AI systems that process classified information and therefore require cleared personnel, accredited facilities and usually bespoke deployment architecture. The US DoD Generative AI Task Force (established August 2023) set the initial pattern; the UK Ministry of Defence's Defence AI Strategy (June 2022, refreshed 2024) sets equivalent expectations. Model weights, prompts and outputs in classified AI are themselves classified by default.

CMMC

The Cybersecurity Maturity Model Certification (CMMC) is the US Department of Defense framework for assessing contractor cybersecurity. CMMC 2.0, which entered its rulemaking phase in 2023 and began staged contract inclusion in 2025, sets three levels tied to the sensitivity of Federal Contract Information and Controlled Unclassified Information. Agentic systems handling CUI must meet Level 2 (NIST SP 800-171 compliance) at minimum.

Compliance-monitoring agent

A compliance-monitoring agent continuously checks regulated processes — procurement, licensing, financial reporting — against policy rules and flags or auto-remediates deviations. In government, the pattern supports anti-fraud controls, subsidy integrity checks and cross-agency data-sharing compliance. It typically combines a rules-as-code layer with a language-model layer for narrative explanation of findings.

Compound AI system

A compound AI system is an application that combines multiple models, retrievers, tools and control logic rather than relying on a single foundation model. Berkeley's 2024 research on the shift from models to compound AI systems popularised the term. Most production-grade government agents are compound AI systems by necessity — pairing a reasoning model with retrieval over sovereign data, classification-aware routing and domain-specific tools.

Contact-centre deflection agent

A contact-centre deflection agent is a voice or chat agent deployed at the front line of a public contact centre to resolve routine queries before a human operator is engaged. Well-designed deflection agents free human capacity for complex cases; poorly designed ones create the opposite effect. Key quality metrics are first-contact resolution, escalation accuracy and citizen satisfaction parity with human-handled cases.

Contestability

Contestability is the practical ability of a citizen to challenge an AI-influenced decision and have it reviewed by a human with authority to change it. It is distinct from explainability: an explanation does not by itself provide a route to remedy. The Council of Europe Framework Convention on AI (2024) makes contestability a core obligation for public-sector systems.

Council of Europe Framework Convention on AI

The Council of Europe Framework Convention on Artificial Intelligence, Human Rights, Democracy and the Rule of Law, opened for signature in September 2024, is the first legally binding international treaty on AI. It commits signatories to rights-based AI governance across the public sector and in private-sector activities that fall within their jurisdiction. Early signatories include the EU, UK, US, Israel and several Council of Europe member states.

Credential scoping

Credential scoping is the practice of issuing an agent only the minimum credentials it needs for a specific task, for a bounded period, against specified resources. It is the operational expression of least privilege in agentic systems. Tooling such as short-lived tokens, scoped OAuth grants and policy-as-code permissions is standard in mature agentic deployments.
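
The three limits named above (task, period, resources) can be illustrated with a small in-memory model. This is a sketch only: `ScopedCredential` and `issue` are hypothetical names, and a production system would rely on its identity provider's tokens rather than a local object.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedCredential:
    agent_id: str
    actions: frozenset     # e.g. {"read"}
    resources: frozenset   # e.g. {"case/C-001"}
    expires_at: float      # Unix timestamp after which the credential is dead

    def permits(self, action: str, resource: str, now: float) -> bool:
        """Allow only unexpired, in-scope requests."""
        return (
            now < self.expires_at
            and action in self.actions
            and resource in self.resources
        )


def issue(agent_id: str, actions, resources, ttl_seconds: float, now: float) -> ScopedCredential:
    """Issue a credential limited to the given actions, resources and lifetime."""
    return ScopedCredential(
        agent_id, frozenset(actions), frozenset(resources), now + ttl_seconds
    )


now = time.time()
cred = issue("agent-7", {"read"}, {"case/C-001"}, ttl_seconds=300, now=now)

can_read = cred.permits("read", "case/C-001", now)
can_write = cred.permits("write", "case/C-001", now)
can_read_other = cred.permits("read", "case/C-002", now)
can_read_later = cred.permits("read", "case/C-001", now + 301)
```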

Critical National Infrastructure (CNI)

Critical National Infrastructure is the set of assets (energy, water, transport, finance, health, communications) whose disruption would significantly harm a state's economic or social functioning. The EU NIS2 Directive (in force from 16 January 2023, transposition deadline 17 October 2024) tightened AI-adjacent security obligations for CNI operators. Agentic systems deployed inside CNI operate under the strictest security and resilience regimes.

D

Data residency

Data residency is the requirement that specified data remain stored and processed within a defined jurisdiction. The UAE Personal Data Protection Law (Federal Decree-Law No. 45 of 2021, in force January 2022), the Saudi PDPL (in force September 2023) and numerous EU member-state rules enforce residency for government data and certain categories of personal data. Agentic systems must route both inference and training data accordingly.

Data sovereignty

Data sovereignty is the broader principle that data is subject to the laws and governance of the jurisdiction in which it is collected or where its subjects reside, regardless of where it is physically stored. It drives procurement preferences for sovereign-cloud deployment, on-premise AI and sovereign model weights in public-sector programmes.

Decision authority

Decision authority is the formally delegated right to make a specific decision on behalf of a public body. In agentic systems, the design question is whether decision authority rests with the agent, with a supervising human, or is jointly exercised with an explicit approval step. Authority cannot be delegated to an agent beyond what enabling legislation permits.

Delegation boundary

A delegation boundary is the explicit perimeter within which an agent is authorised to act: categories of tasks, data it may access, tools it may call, monetary or legal limits of any action it may take on a citizen's behalf. Boundaries are typically encoded as policy-as-code and enforced at the agent runtime rather than relied upon as prompt instructions.
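
Encoding a boundary as policy-as-code might look like the following sketch, which checks a proposed action at the runtime before it executes. The class names, the `violations` function and the example limits are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DelegationBoundary:
    task_categories: frozenset
    tools: frozenset
    max_amount: float  # ceiling on the monetary value of any single action


@dataclass(frozen=True)
class ProposedAction:
    task_category: str
    tool: str
    amount: float = 0.0


def violations(boundary: DelegationBoundary, action: ProposedAction) -> list:
    """Return every way the action falls outside the boundary (empty list = in bounds)."""
    found = []
    if action.task_category not in boundary.task_categories:
        found.append("task category outside mandate")
    if action.tool not in boundary.tools:
        found.append("tool not permitted")
    if action.amount > boundary.max_amount:
        found.append("amount exceeds limit")
    return found


boundary = DelegationBoundary(
    task_categories=frozenset({"licence_renewal"}),
    tools=frozenset({"lookup_licence", "draft_notice"}),
    max_amount=100.0,
)
in_bounds = violations(boundary, ProposedAction("licence_renewal", "draft_notice", 25.0))
out_of_bounds = violations(boundary, ProposedAction("licence_renewal", "issue_refund", 500.0))
```

Returning the full list of violations, rather than a bare yes or no, gives the audit trail a reason code for each refused action.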

Digital caseworker

A digital caseworker is a term of art for an agent designed to augment or partially substitute a human caseworker in a high-volume government service such as housing, immigration or tax. It is a workflow frame as much as a technical one — the agent exists to compress administrative burden, not to replace legal accountability, which remains with the human service.

Due process (AI)

Due process in the AI context is the administrative-law principle that individuals affected by a government decision are entitled to notice, a fair procedure, a reasoned decision and an effective route of challenge — whether or not AI was involved. In 2026, it is the lens most likely to be applied in judicial review of agentic government systems and the core reference for disclosure, explanation and contestability requirements.

Dual-use AI

Dual-use AI is an AI capability that can be deployed for both civilian and military or security purposes. The EU Dual-Use Regulation (Regulation (EU) 2021/821) and the US Export Administration Regulations cover the transfer of such capabilities. The 2024 Seoul Frontier AI Safety Commitments specifically address dual-use considerations in frontier model release decisions.

E

Emergency-response agent

An emergency-response agent supports command-and-control in disaster, civil-defence and public-health emergencies — triaging incoming calls, drafting alerts, fusing sensor data and tracking resource deployment. Because decisions are high-consequence and reversibility is low, these agents are typically human-in-the-loop for any action that binds responders or the public.

Evaluation (agent)

Agent evaluation is the systematic measurement of an agent's performance against representative tasks, edge cases and adversarial inputs. Metrics include task completion rate, policy-adherence rate, hallucination rate, escalation accuracy, latency and cost per task. In 2026, a rising share of agent evaluation is continuous — running against live traffic — rather than only pre-deployment.
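
Several of the metrics named above reduce to simple ratios over a set of evaluated tasks. The sketch below assumes each task has already been scored; the `TaskResult` fields and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    completed: bool
    policy_adherent: bool
    should_escalate: bool   # ground truth from the evaluation set
    did_escalate: bool      # what the agent actually did
    latency_s: float
    cost: float


def summarise(results: list) -> dict:
    """Aggregate per-task results into headline evaluation metrics."""
    if not results:
        raise ValueError("no results to summarise")
    n = len(results)
    return {
        "task_completion_rate": sum(r.completed for r in results) / n,
        "policy_adherence_rate": sum(r.policy_adherent for r in results) / n,
        # Share of tasks where the escalation decision matched the ground truth
        "escalation_accuracy": sum(r.should_escalate == r.did_escalate for r in results) / n,
        "mean_latency_s": sum(r.latency_s for r in results) / n,
        "cost_per_task": sum(r.cost for r in results) / n,
    }


results = [
    TaskResult(True, True, False, False, 2.0, 0.04),
    TaskResult(True, False, True, False, 4.0, 0.06),
    TaskResult(False, True, True, True, 6.0, 0.08),
    TaskResult(True, True, False, False, 4.0, 0.06),
]
metrics = summarise(results)
```

The same function can be run over a pre-deployment test set or over a sample of live traffic, which is what makes continuous evaluation practical.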

Evidence store

The evidence store is the repository that holds the audit trail, approvals, model versions and supporting documentation for every agent-mediated decision. Under EU AI Act, NIST AI RMF and Council of Europe Convention requirements, the evidence store must be durable, tamper-evident and retrievable on demand by oversight bodies.

Executive Order on AI (US)

US Executive Order 14110 on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, signed October 2023, established the main federal AI-governance scaffolding — CAIO appointments, agency use-case inventories, safety testing for frontier models and civil-rights guardrails. EO 14110 was revoked on 20 January 2025 by Executive Order 14148; federal AI policy is now anchored in the April 2025 OMB memoranda M-25-21 and M-25-22.

Explainability for agents

Explainability for agents is the ability to articulate, in natural language, why an agent took a specific action: which data it relied on, which rule it applied, which tool output it used. It is distinct from model interpretability, which is about the internal workings of a model. In government, the operational requirement is usually explainability at the decision level, not at the model level.

F

FedRAMP

FedRAMP (Federal Risk and Authorization Management Program) is the US federal government's standardised security-assessment and authorisation framework for cloud services. Baselines are Low, Moderate and High; FedRAMP 20x (announced March 2025) streamlines reauthorisation for generative-AI services. Agentic services procured by US federal agencies must hold an appropriate FedRAMP authorisation before operating on federal data.

Fraud-detection agent

A fraud-detection agent identifies anomalous claims, transactions or filings — in tax, benefits, grants or procurement — and prioritises them for investigation. The UK HMRC Connect system (in production since 2010, with AI extensions since 2022) and the US SSA Anti-Fraud Enterprise Solution (in deployment from 2023) are representative. Agentic extensions typically automate case preparation rather than the adverse decision itself.

Freedom of Information (FOI) agent

An FOI agent assists with locating, redacting and drafting responses to Freedom of Information requests. It can compress a multi-week turnaround into days, but introduces specific risks around improper disclosure, jurisdictional variation in exemptions, and handling of third-party information. UK ICO guidance (updated 2024) treats AI-assisted FOI as a form of automated processing with particular care required for personal data.

Frontier AI

Frontier AI describes the most capable general-purpose AI models at a given time — those whose capabilities are understood poorly enough to pose systemic risk. The Bletchley Declaration, the Frontier AI Safety Commitments and the Seoul Declaration all use the term. National AI Safety Institutes concentrate their evaluations on frontier models.

Frontier AI Safety Commitments

The Frontier AI Safety Commitments, adopted at the May 2024 Seoul AI Summit, were agreed by 16 leading AI developers. They include publication of safety frameworks, identification of red-line capabilities and a commitment not to develop or deploy models whose risks cannot be sufficiently mitigated. Governments have begun referencing these commitments in procurement frameworks for frontier-model services.

G

G-Cloud

G-Cloud is the UK government's framework for procuring cloud and related services, operated through the Digital Marketplace. Suppliers publish services under agreed terms, and public bodies can call off directly without a full competition. The G-Cloud 14 iteration, in effect from late 2024, introduced clearer handling of AI, generative AI and agent-based services.

Generative AI in government

Generative AI in government refers to the use of large language models and related systems to draft, summarise, translate, classify and reason across public-sector tasks. The 2024 OECD Governing with AI report (updated 2025) catalogues deployment patterns across 40+ countries; common entry points are contact centres, internal knowledge bases and policy-drafting assistants.

Grants-administration agent

A grants-administration agent supports the full grant lifecycle — call design, application triage, eligibility checking, evaluation scoring and post-award reporting. In public-sector grant programmes, the agent is almost always human-in-the-loop at the award decision, but can substantially compress the administrative burden around it.

Guardrails

Guardrails are the technical and policy controls that constrain what an agent may or may not do. They include input filters (what the agent will respond to), output filters (what the agent will say), tool-permission policy (what the agent may call), refusal rules, disclosure templates and escalation triggers. Guardrails sit between the agent runtime and the model, not inside the model.

H

High-risk AI system (EU AI Act)

A high-risk AI system is an AI system that, under the EU AI Act, either is a safety component of a regulated product or is used in a high-risk domain listed in Annex III — including migration and border control, law enforcement, education, employment, essential services and administration of justice. High-risk systems must meet obligations on risk management, data governance, transparency, human oversight, accuracy, robustness and cybersecurity from 2 August 2026.

Human-in-the-loop (HITL)

Human-in-the-loop is the design pattern in which an agent proposes an action and a human must approve it before it executes. It is the default pattern for high-impact government decisions — benefits refusal, immigration decisions, grants award, policy drafting — and is usually a legal requirement rather than an option.
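
The propose-then-approve pattern can be sketched as a small state machine in which execution is impossible until a named human has approved. The `Proposal`, `decide` and `execute` names, and the example identifiers, are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    EXECUTED = "executed"


@dataclass
class Proposal:
    description: str
    status: Status = Status.PENDING
    decided_by: Optional[str] = None  # identity of the approving official


def decide(proposal: Proposal, official: str, approve: bool) -> None:
    """Record the human decision; only pending proposals can be decided."""
    if proposal.status is not Status.PENDING:
        raise ValueError("proposal has already been decided")
    proposal.status = Status.APPROVED if approve else Status.REJECTED
    proposal.decided_by = official


def execute(proposal: Proposal, action: Callable[[], str]) -> str:
    """Run the action only if a human has approved the proposal."""
    if proposal.status is not Status.APPROVED:
        raise PermissionError("human approval required before execution")
    result = action()
    proposal.status = Status.EXECUTED
    return result


proposal = Proposal("Issue refusal letter for claim C-001")
try:
    execute(proposal, lambda: "letter sent")
    blocked = False
except PermissionError:
    blocked = True  # the agent cannot act on an undecided proposal

decide(proposal, official="caseworker-12", approve=True)
outcome = execute(proposal, lambda: "letter sent")
```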

Human-on-the-loop

Human-on-the-loop is the pattern in which an agent acts autonomously within its delegation boundary, with a human supervising in aggregate and intervening on exception. It is appropriate for high-volume, low-individual-impact tasks such as routing, drafting and triage, where individual review is impractical but oversight of the population of outcomes remains essential.

Human-out-of-the-loop

Human-out-of-the-loop is the pattern in which an agent operates without direct human intervention — typical for machine-speed tasks such as cyber-defence response, document indexing and routine translation. In government, this pattern is confined to tasks where errors are readily reversible and do not directly affect citizens' rights.

I

IL2–IL6 (DoD Impact Levels)

Impact Levels are the US Department of Defense's classification of cloud environments by sensitivity of the data they handle. IL2 covers public and non-controlled data; IL4 covers Controlled Unclassified Information; IL5 covers CUI with elevated impact and some unclassified National Security information; IL6 covers classified SECRET information. Each level imposes progressively stricter deployment, personnel and accreditation requirements on agentic systems.

Indirect prompt injection

Indirect prompt injection is an attack in which malicious instructions are embedded in data the agent later retrieves — a web page, a document, an email — rather than in the user's direct prompt. It is the single most important class of vulnerability in retrieval-augmented and tool-using agents. The UK National Cyber Security Centre's 2024 AI security guidance treats it as a top-priority risk for agentic deployments.

Intelligence-triage agent

An intelligence-triage agent prioritises and summarises incoming intelligence — OSINT feeds, SIGINT reports, all-source cables — for human analysts. In defence and security services, it is the most widely deployed pattern of agentic AI, because the human analyst remains the accountable producer of any finished intelligence product.

Interoperability

Interoperability is the ability of agents, models and tools from different vendors to work together through shared protocols. In 2026, the leading interoperability standards in agentic systems are the Model Context Protocol (MCP), released by Anthropic in November 2024, and Google's Agent2Agent (A2A) protocol, released in April 2025. Government procurement guidance is beginning to prefer solutions that implement these open standards.

IRAP (Australia)

IRAP is the Australian Information Security Registered Assessors Program, operated by the Australian Signals Directorate. An IRAP assessment against the Information Security Manual (ISM) is the standard gateway for agentic services handling Australian government data, with additional requirements for PROTECTED and above classifications.

ISO/IEC 42001

ISO/IEC 42001, published December 2023, is the first international management-system standard for AI. It specifies requirements for an AI Management System (AIMS) — policy, risk assessment, supplier controls, operations — analogous to ISO 27001 for information security. Public-sector procurement frameworks in the EU, UK and GCC have begun referencing ISO/IEC 42001 certification as a preferred assurance signal.

ISO/IEC 23894

ISO/IEC 23894 (2023) provides guidance on AI risk management consistent with ISO 31000. It is frequently paired with ISO/IEC 42001 in agency AI-governance frameworks and is one of the two international standards most commonly named in 2026 procurement specifications.

J

JADC2

JADC2 is the US Department of Defense's Joint All-Domain Command and Control initiative, which aims to integrate sensors and shooters across services through AI-enabled decision support. The CJADC2 implementation plan (released 2024) formalises the role of agentic AI for data fusion, course-of-action generation and targeting decision support, under human-control requirements set by DoD Directive 3000.09 (2023 update).

JOSCAR

JOSCAR is the collaborative supplier-qualification registry used across UK defence and aerospace procurement. Agentic solutions supplying sensitive defence programmes are increasingly required to hold JOSCAR qualification alongside Defence Cyber Protection Partnership controls and UK MOD security clearances.

K

Kill switch

A kill switch is a mechanism that allows a human with authority to immediately disable an agent or a class of agents. It is a hardware or infrastructure-level control rather than a model control — usually a revocation of the agent's credentials or a tear-down of its runtime — so that a compromised model cannot override its own disablement.
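The idea that disablement lives in the runtime rather than in the model can be sketched as follows. This is a minimal illustration, assuming a hypothetical `CredentialRegistry` that the agent runtime consults before every tool call; none of these names come from a real product.

```python
from dataclasses import dataclass, field


@dataclass
class CredentialRegistry:
    """Hypothetical runtime-side registry of revoked agent credentials."""
    revoked: set[str] = field(default_factory=set)

    def revoke(self, agent_id: str) -> None:
        # The kill switch: an authorised human revokes the agent's credentials.
        self.revoked.add(agent_id)

    def is_active(self, agent_id: str) -> bool:
        return agent_id not in self.revoked


def call_tool(registry: CredentialRegistry, agent_id: str, tool: str) -> str:
    # The check runs in the runtime, outside the model, on every call.
    if not registry.is_active(agent_id):
        raise PermissionError(f"agent {agent_id} is disabled")
    return f"{tool} executed for {agent_id}"
```

Because the check sits outside the model, nothing the model generates can re-enable a revoked agent in this sketch.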

L

Legacy modernisation copilot

A legacy modernisation copilot is an agent that assists engineers in understanding, documenting and refactoring COBOL, PL/I, FORTRAN and other legacy codebases that remain widespread in government. Gartner estimated in 2023 that roughly 200 billion lines of COBOL were still in active use worldwide, and the pattern has become the single most cost-efficient agentic use case for many ministries.

Legislative-drafting assistant

A legislative-drafting assistant supports parliamentary counsel and policy teams in producing legislative text, impact assessments and explanatory memoranda. Effective deployments treat drafting as a human activity assisted by the agent rather than the reverse — the agent proposes, the counsel disposes — with all final responsibility remaining with the named drafters.

Licensing and permitting agent

A licensing and permitting agent supports the handling of applications for licences, permits and authorisations — planning, construction, transport, environmental, professional. It can reduce cycle times materially while preserving human sign-off. Common failure modes involve silent policy drift as regulations change faster than the agent's knowledge base.

M

Machine-readable regulation

Machine-readable regulation (also rules-as-code) is the practice of expressing laws, regulations or internal policies in a structured, executable form that agents can reason over directly. The OECD's Observatory of Public Sector Innovation and New Zealand's Better Rules programme (2018 onwards) are the reference initiatives; the EU's digital-ready policymaking agenda is the regional equivalent.
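As a minimal sketch of rules-as-code, the Python below encodes an invented eligibility rule as data plus one evaluation function. The rule, its thresholds and the field names (`age`, `months_resident`) are assumptions made for illustration and are not drawn from any real statute or programme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Applicant:
    age: int
    months_resident: int


# Hypothetical rule: thresholds are illustrative, not drawn from real law.
RULE = {"min_age": 18, "min_months_resident": 12}


def evaluate(applicant: Applicant, rule: dict = RULE) -> tuple[bool, list[str]]:
    """Return (eligible, failed_conditions) so the outcome can be explained."""
    failed = []
    if applicant.age < rule["min_age"]:
        failed.append("min_age")
    if applicant.months_resident < rule["min_months_resident"]:
        failed.append("min_months_resident")
    return (not failed, failed)
```

Keeping the thresholds in data rather than in the function body means a regulatory change becomes a reviewed edit to `RULE`, not a code rewrite.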

Memory (agent)

Agent memory is the stored state an agent uses across turns or sessions: short-term working memory within a session, and long-term memory that persists across sessions. Memory is one of the highest-risk components in public-sector agents: it can accumulate personal data, biases and stale information, and typically requires explicit retention, deletion and access-control policies.
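A retention policy of the kind described above might look like the following sketch. The record fields and the 30-day and 365-day periods are assumptions chosen for illustration; real values would come from the deploying body's records policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class MemoryRecord:
    key: str
    contains_personal_data: bool
    stored_at: datetime


# Hypothetical retention periods, keyed by whether personal data is present.
RETENTION = {True: timedelta(days=30), False: timedelta(days=365)}


def purge_expired(records: list[MemoryRecord], now: datetime) -> list[MemoryRecord]:
    """Return only the records still inside their retention period."""
    return [
        r for r in records
        if now - r.stored_at <= RETENTION[r.contains_personal_data]
    ]


now = datetime(2026, 4, 22, tzinfo=timezone.utc)
records = [
    MemoryRecord("old-personal", True, now - timedelta(days=31)),
    MemoryRecord("recent-personal", True, now - timedelta(days=5)),
    MemoryRecord("old-general", False, now - timedelta(days=200)),
]
kept = purge_expired(records, now)
```

Here only `old-personal` falls outside its retention period, so it is the only record dropped.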

Mission-planning agent

A mission-planning agent supports military or civil-defence planners in generating, evaluating and refining courses of action against a set of objectives and constraints. In 2026, deployments are confined to decision-support roles under the human-in-the-loop pattern required by DoD Directive 3000.09 and by NATO's AI strategy commitments.

Model Context Protocol (MCP)

The Model Context Protocol (MCP), open-sourced by Anthropic in November 2024, is a standard that lets language models connect to tools, data sources and workflows through a common interface. By 2026, MCP has become a de facto standard across major model vendors and is referenced in EU and UK government procurement guidance as a preferred interoperability mechanism for agentic systems.
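MCP messages are exchanged as JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a request as JSON; the tool name `lookup_permit` and its argument are hypothetical, and the published MCP specification remains the authoritative source for the full schema.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialise an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# `lookup_permit` and `permit_id` are invented for illustration only.
request = build_tool_call(1, "lookup_permit", {"permit_id": "P-001"})
```

Because every tool is reached through the same message shape, a runtime can log and authorise calls uniformly regardless of which vendor supplied the tool.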

Model provenance

Model provenance is documented evidence of how a model was trained, fine-tuned, evaluated and modified across its lifecycle, including training-data sources, rights, and any post-training changes. It is the supply-chain equivalent of a bill of materials. Several 2024-2025 procurement frameworks — including the US GSA AI guidance and the EU's high-risk supplier requirements — now demand model-provenance records.

Multi-agent system

A multi-agent system is an agentic workflow in which specialised agents cooperate on a task, coordinated by an orchestrator agent or a defined protocol. In government, multi-agent systems are typically used for case resolution, complex research and OSINT pipelines where a single model would be overloaded. They introduce additional accountability design questions: which agent is responsible when the system as a whole produces a wrong output.

N

National AI strategy

A national AI strategy is a published government plan for building, deploying and regulating AI at national scale. As of mid-2025, more than 70 jurisdictions had published national AI strategies; notable 2024-2025 updates include the UK AI Opportunities Action Plan (January 2025), the US AI Action Plan (July 2025), the UAE Strategy for Artificial Intelligence 2031 (updated 2024) and Saudi Arabia's NSDAI update (2025).

NIS2 Directive

NIS2 (Directive (EU) 2022/2555) is the EU's updated network and information security directive, in force since 16 January 2023, with a transposition deadline of 17 October 2024. It significantly expands the set of entities that must meet cyber-resilience obligations and applies to many public bodies. Agentic systems deployed inside NIS2-scope entities inherit its reporting and supply-chain obligations.

NIST AI RMF

The NIST AI Risk Management Framework, released January 2023 and extended with the Generative AI Profile in July 2024, is the principal US federal framework for managing AI risk. It organises controls around four functions — Govern, Map, Measure, Manage — and is increasingly used outside the US as a reference architecture. NIST AI RMF is now cited by most agency ATO packages involving AI.

Non-classified vs Controlled Unclassified Information (CUI)

Controlled Unclassified Information is US federal information that is not classified but requires safeguarding or dissemination control. CUI is regulated under 32 CFR 2002 (in force November 2016) and is the boundary above which FedRAMP Moderate, CMMC Level 2 and similar controls become mandatory for agentic services.

O

OECD AI Principles

The OECD AI Principles, adopted in May 2019 and updated in May 2024, are the first intergovernmental standard on AI, now endorsed by 47 adherents. The 2024 update explicitly addresses general-purpose and generative AI, making the principles a live reference for agentic public-sector deployment. They underpin the language used in the G7 Hiroshima Process Code of Conduct and the Council of Europe Framework Convention on AI.

On-premise agent

An on-premise agent is an agent whose full inference stack — model, retrieval, tools — runs inside infrastructure owned or directly controlled by the deploying government. It is the default deployment pattern for classified systems and for unclassified systems in jurisdictions with strict data-residency laws. Trade-offs against sovereign-cloud deployment are cost, upgrade cadence and the practical ceiling on model size.

Open-source AI in government

Open-source AI in government is the use of models whose weights (and ideally training data and code) are publicly released under permissive licences. It has become a material part of sovereign AI strategies — the UAE's Falcon models, France's Mistral family, Singapore's SEA-LION series and Llama-family fine-tunes are widely deployed. Open-source use shifts risk from vendor lock-in to governance of the in-house modification process.

Orchestration

Orchestration is the coordination of the steps in an agentic workflow — which model to call, which tool to use, when to retrieve, when to escalate to a human. In 2026, orchestration is typically a distinct layer between the interface and the models, implemented in frameworks that enforce policy-as-code.

OSINT agent

An OSINT agent processes open-source intelligence — news feeds, public records, social media, satellite imagery — to produce briefings and leads for analysts. It is one of the most widely deployed agentic patterns in defence, security and foreign-policy analysis, and is typically combined with human-in-the-loop approval for any externally visible action.

P

Planner-executor pattern

The planner-executor pattern is a common agent architecture in which one component (planner) decomposes a goal into steps and another (executor) carries out each step, calling tools or asking for clarification as needed. It dominates 2026 production deployments because it isolates planning errors from execution errors and makes each layer easier to evaluate.
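A stripped-down version of the pattern is sketched below. The planner here returns a fixed plan in place of a model call, and the two tools are hypothetical stand-ins; the point is only that planning and execution are separate functions that can be tested separately.

```python
from typing import Callable

# Hypothetical tools; a real deployment would call registered services.
TOOLS: dict[str, Callable[[str], str]] = {
    "fetch_record": lambda arg: f"record for {arg}",
    "draft_reply": lambda arg: f"draft based on {arg}",
}


def plan(goal: str) -> list[tuple[str, str]]:
    """Planner: decompose the goal into (tool, argument) steps.

    A fixed plan stands in for a model call in this sketch.
    """
    return [("fetch_record", goal), ("draft_reply", "previous step")]


def execute(steps: list[tuple[str, str]]) -> list[str]:
    """Executor: run each step and keep a per-step trace for evaluation."""
    trace = []
    for tool, arg in steps:
        if tool not in TOOLS:
            # A planning error is caught here, before any tool runs.
            raise ValueError(f"planner proposed unknown tool: {tool}")
        trace.append(TOOLS[tool](arg))
    return trace
```

An invalid plan fails at the executor's validation step, which is one way the two kinds of error stay distinguishable in logs.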

Policy adherence

Policy adherence is the rate at which an agent's actions comply with its written policy constraints, such as disclosure rules, tool restrictions and escalation thresholds. It is one of the core agent-evaluation metrics. The NIST AI RMF is a voluntary framework and does not mandate the metric, but its MEASURE function (notably MEASURE 2.4, on monitoring system behaviour in production) supports tracking it continuously for high-risk systems.
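The metric itself is a simple ratio. The sketch below assumes each logged action carries a `violations` list, which is an invented log format used only for illustration.

```python
def policy_adherence_rate(actions: list[dict]) -> float:
    """Share of logged actions with no recorded policy violation."""
    if not actions:
        raise ValueError("no actions logged")
    compliant = sum(1 for action in actions if not action["violations"])
    return compliant / len(actions)


# Hypothetical action log: one of four actions breached a disclosure rule.
log = [
    {"id": 1, "violations": []},
    {"id": 2, "violations": ["disclosure_rule"]},
    {"id": 3, "violations": []},
    {"id": 4, "violations": []},
]
rate = policy_adherence_rate(log)
```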

Policy-analysis agent

A policy-analysis agent supports civil servants in synthesising evidence, comparing options and producing draft analysis for ministers. Effective deployments treat the agent as a research associate whose outputs must be checked against sources, rather than a decision system. The UK's Redbox policy-drafting AI, in pilot across Whitehall since 2024, is a public example.

Privacy Impact Assessment (PIA)

A Privacy Impact Assessment is a structured evaluation of how an AI system processes personal data, the risks involved, and the mitigations in place. Under GDPR Article 35, a Data Protection Impact Assessment — the EU equivalent of a PIA — is mandatory for high-risk processing. Agentic deployments typically require a fresh PIA, because their ability to chain tools and memory introduces risks beyond those in traditional software.

Procurement-evaluation agent

A procurement-evaluation agent assists procurement officers in checking compliance of bids, extracting key claims and flagging evaluation issues for human scoring. The pattern protects the scoring decision — which remains human — while compressing the document-handling burden that often dominates public procurement.

Prompt injection

Prompt injection is an attack in which a user or third party inserts instructions into the prompt that subvert the agent's intended behaviour. It can be direct (in the user's own input) or indirect (through retrieved content). OWASP's LLM Top 10 (2023, updated 2024 and 2025) ranks prompt injection as the single highest-priority vulnerability in LLM-based applications.

Public-private partnership (AI)

A public-private partnership in AI is a structured arrangement in which a government body and private vendors jointly design, build or operate an AI capability, typically with shared IP, sovereignty protections and data-handling terms. Variants include sovereign-foundry models (government-owned weights trained by a vendor), managed sovereign cloud and shared AI platforms.

R

ReAct

ReAct (Reason+Act) is an agent pattern introduced in a 2022 Princeton and Google Research paper in which a model alternates between reasoning steps and actions on tools, interleaving chain-of-thought with tool calls. It is the ancestor of most modern agent loops. Its strength is transparency of reasoning steps, useful for agents that must produce an auditable trail of why an action was taken.
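The interleaving of reasoning and action can be sketched as a short loop. The `scripted_model` below is a hard-coded stand-in for a language model, and the `lookup` tool and its output are invented; the sketch shows the shape of the loop and the audit trail it produces, not the paper's implementation.

```python
from typing import Callable


def react_loop(
    model: Callable[[list[str]], dict],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> tuple[str, list[str]]:
    """Alternate thought, action and observation until the model finishes."""
    transcript: list[str] = []
    for _ in range(max_steps):
        step = model(transcript)
        transcript.append(f"Thought: {step['thought']}")
        if step["action"] == "finish":
            return step["input"], transcript
        observation = tools[step["action"]](step["input"])
        transcript.append(f"Action: {step['action']}[{step['input']}]")
        transcript.append(f"Observation: {observation}")
    raise RuntimeError("step budget exhausted")


def scripted_model(transcript: list[str]) -> dict:
    # Stand-in for a model call: look something up once, then answer.
    if not any(line.startswith("Observation") for line in transcript):
        return {"thought": "I need the fee table.", "action": "lookup", "input": "fee"}
    return {"thought": "I have the fee.", "action": "finish", "input": "The fee is 40."}


tools = {"lookup": lambda query: "fee = 40"}
answer, trail = react_loop(scripted_model, tools)
```

The returned `trail` is the auditable record: every action is preceded by the thought that motivated it.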

Reason codes

Reason codes are structured labels attached to an agent's output that explain, in standardised terms, the basis for a decision — eligibility criterion not met, documentation incomplete, classification uncertain. They are the unit of explanation easiest to aggregate, audit and challenge, and are increasingly expected in high-risk government systems.
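One way to make reason codes aggregable is to model them as an enumeration attached to each decision record. The codes below reuse the three examples from the definition; the `Decision` structure and field names are assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class ReasonCode(Enum):
    # Illustrative codes taken from the examples in the definition above.
    CRITERION_NOT_MET = "eligibility criterion not met"
    DOCS_INCOMPLETE = "documentation incomplete"
    CLASSIFICATION_UNCERTAIN = "classification uncertain"


@dataclass(frozen=True)
class Decision:
    case_id: str
    outcome: str
    reasons: tuple[ReasonCode, ...]


def reason_frequency(decisions: list[Decision]) -> Counter:
    """Aggregate reason codes across decisions for audit reporting."""
    return Counter(code for d in decisions for code in d.reasons)


decisions = [
    Decision("C-1", "refer", (ReasonCode.DOCS_INCOMPLETE,)),
    Decision("C-2", "refer", (ReasonCode.DOCS_INCOMPLETE, ReasonCode.CLASSIFICATION_UNCERTAIN)),
]
frequency = reason_frequency(decisions)
```

A closed enumeration, rather than free text, is what lets an oversight body count and compare reasons across thousands of cases.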

Red-teaming (government)

Government red-teaming is the practice of adversarially testing an AI system to expose failures before deployment. The US NIST Assessing Risks and Impacts of AI (ARIA) programme (launched May 2024) and the UK AISI's pre-deployment evaluations of frontier models (since 2024) are the reference public-sector practices. Agent red-teaming must include tool-chain attacks and indirect prompt injection, not only language-level jailbreaks.

Regulatory sandbox

A regulatory sandbox is a time-limited, supervised environment in which a regulated actor may test an AI system under partially relaxed rules. The EU AI Act's sandbox regime (Articles 57-61) and the UK Financial Conduct Authority's AI-related sandboxes are the two most mature examples. They are most effective when combined with clear exit criteria for live deployment.

Responsible AI (government)

Responsible AI in the public-sector sense is an umbrella term for the set of practices — governance, ethics review, transparency, redress — that support rights-based AI deployment. The phrase is useful as a framing, but programmes generally require concrete underlying controls mapped to NIST AI RMF, ISO/IEC 42001 or the Council of Europe Convention.

Reversibility

Reversibility is the property that an action taken by an agent can be undone without lasting harm. It is the primary design criterion for choosing the level of autonomy appropriate to a task: the less reversible the action, the more human involvement the deployment needs and the further it must sit from human-out-of-the-loop operation.
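The relationship between reversibility and autonomy can be written down as an explicit mapping. The three impact levels and the specific thresholds below are assumptions for illustration; in practice the mapping is a policy decision for the deploying authority.

```python
from enum import Enum


class Oversight(Enum):
    HUMAN_IN_THE_LOOP = "human approves before the action"
    HUMAN_ON_THE_LOOP = "human supervises and intervenes on exception"
    HUMAN_OUT_OF_THE_LOOP = "agent acts autonomously"


def minimum_oversight(reversible: bool, impact: str) -> Oversight:
    """Illustrative mapping only; impact is 'low', 'medium' or 'high'."""
    if impact not in {"low", "medium", "high"}:
        raise ValueError(f"unknown impact level: {impact}")
    if not reversible or impact == "high":
        # Irreversible or high-impact actions always need prior approval.
        return Oversight.HUMAN_IN_THE_LOOP
    if impact == "medium":
        return Oversight.HUMAN_ON_THE_LOOP
    return Oversight.HUMAN_OUT_OF_THE_LOOP
```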

S

Safety case

A safety case is a structured, evidence-backed argument that a system is acceptably safe for its intended use in its intended environment. Originating in nuclear, aviation and rail regulation, safety cases are increasingly applied to frontier AI: Anthropic, Google DeepMind and OpenAI all published safety-case materials in line with the 2024 Seoul Frontier AI Safety Commitments. Government deployments of frontier-model agents increasingly demand equivalent safety-case evidence.

Seoul Declaration

The Seoul Declaration is the outcome statement of the May 2024 AI Seoul Summit, signed by ten countries and the EU. It extended the Bletchley consensus with a commitment to evaluate frontier models for risks including loss of control, and was paired with the Frontier AI Safety Commitments adopted by 16 leading developers at the same summit.

Sensor-to-decision loop

The sensor-to-decision loop is the military-AI concept of compressing the time between a sensor detecting an event and a decision being reached. JADC2 and equivalent NATO doctrines treat agentic AI as the dominant technology for compressing this loop, within human-control requirements set by DoD Directive 3000.09 and NATO's 2024 AI strategy.

Service level objective (SLO)

A service level objective is a quantified performance target — latency, availability, policy-adherence rate, human-override rate — set for an agent or agentic workflow. SLOs are the unit of continuous accreditation under cATO regimes: breach of an SLO triggers investigation, remediation and potentially suspension.
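An SLO check reduces to comparing observed metrics against targets. The metric names and target values in this sketch are hypothetical; real targets would be set by the accrediting authority.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SLO:
    metric: str
    target: float
    higher_is_better: bool


# Hypothetical targets for illustration only.
SLOS = [
    SLO("availability", 0.995, higher_is_better=True),
    SLO("policy_adherence_rate", 0.99, higher_is_better=True),
    SLO("p95_latency_seconds", 3.0, higher_is_better=False),
]


def breaches(observed: dict[str, float], slos: list[SLO] = SLOS) -> list[str]:
    """Return the metrics whose observed value misses the target."""
    missed = []
    for slo in slos:
        value = observed[slo.metric]
        ok = value >= slo.target if slo.higher_is_better else value <= slo.target
        if not ok:
            missed.append(slo.metric)
    return missed


observed = {"availability": 0.999, "policy_adherence_rate": 0.98, "p95_latency_seconds": 2.1}
missed = breaches(observed)
```

A non-empty result is the trigger for the investigation and remediation steps described above.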

Sovereign agent

A sovereign agent is an agent whose full stack — compute, data, model weights, runtime and audit layer — operates under a jurisdiction's legal and operational control. It is distinct from cloud-hosted agents running on multinational infrastructure. Sovereign-agent deployments are increasingly the default pattern for classified, national-security and sensitive citizen-services workloads.

Sovereign cloud

Sovereign cloud is a cloud deployment pattern in which all compute, storage and operations remain under the jurisdiction and, usually, the direct control of the customer state. Examples include Microsoft's Azure Government, the AWS Top Secret and Secret Regions, and Google Distributed Cloud in its air-gapped configuration. In 2026, sovereign cloud is a prerequisite for most agent deployments in EU member states under emerging national AI strategies and in all Five Eyes classified environments.

Sovereign compute

Sovereign compute is the infrastructure layer beneath sovereign AI: GPUs, accelerators and the supporting facilities, owned or controlled such that the state can guarantee access, continuity and non-exfiltration. National programmes in the UK (AI Research Resource), France (Jean Zay supercomputer), the UAE, Saudi Arabia and Singapore have each committed multibillion-dollar compute budgets between 2023 and 2025 with sovereign compute as an explicit policy objective.

Sovereign model weights

Sovereign model weights are the parameter files of an AI model that are stored, used and legally controlled within a jurisdiction. They are the core asset in sovereign-AI programmes because access to weights determines who can run, fine-tune, evaluate or export the model. Net0 builds proprietary AI models in part for this reason — sovereign weight control cannot be retrofitted onto API-only foundation models.

Supply chain assurance (AI)

Supply chain assurance is the set of controls that govern the provenance and integrity of every input into an agent — training data, base models, fine-tuning data, tools, third-party APIs. NIST SP 800-161 Rev. 1 and the EU Cyber Resilience Act provide the main frameworks; agentic systems add model-provenance and tool-permission records to the supply-chain assurance pack.

System prompt

The system prompt is the instruction given to a model at the start of every session that establishes its role, constraints and behaviour. In agentic systems, the system prompt is a core policy artefact that is version-controlled, reviewed and subject to change management, not a parameter that operators edit freely.

T

Task completion rate

Task completion rate is the proportion of agent sessions that successfully end in the intended outcome — a resolved query, a correctly drafted response, a valid case transferred. It is the top-line agent-performance metric and the one against which cost per task is most commonly normalised.
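The two quantities mentioned above are simple ratios, sketched here with invented figures. The function names are illustrative rather than taken from any evaluation library.

```python
def task_completion_rate(completed: int, total: int) -> float:
    """Proportion of sessions that ended in the intended outcome."""
    if total <= 0:
        raise ValueError("total sessions must be positive")
    return completed / total


def cost_per_completed_task(total_cost: float, completed: int) -> float:
    """Total running cost divided by the number of completed tasks."""
    if completed <= 0:
        raise ValueError("no completed tasks to normalise against")
    return total_cost / completed


# Invented figures: 850 of 1,000 sessions completed at a total cost of 425.0.
rate = task_completion_rate(850, 1000)
unit_cost = cost_per_completed_task(425.0, 850)
```

Normalising cost against completed tasks rather than all sessions stops a cheap but ineffective agent from looking efficient.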

Threat modelling

Threat modelling is the structured identification of attack surfaces, threat actors and likely failure modes for an AI system. The UK NCSC 2024 AI security guidance and MITRE ATLAS (updated 2025) are the two reference threat-model libraries for agentic systems. Threat modelling must be refreshed on material changes to tools, memory or model.

Tiered AI risk (EU AI Act)

Tiered AI risk is the EU AI Act's four-tier classification: unacceptable (prohibited), high (heavily regulated), limited (transparency obligations) and minimal (few obligations). Most government uses that directly affect citizens fall into the high tier. Some uses fall into the unacceptable tier and are prohibited: social scoring by public authorities, emotion recognition in workplaces and educational institutions, and real-time remote biometric identification in public spaces for law enforcement, subject to narrow exceptions.

Tool use

Tool use is the capability of an agent to call external tools — APIs, databases, workflow systems, calculators — as part of its reasoning loop. It is what distinguishes an agent from a pure chatbot. Tool use is also the principal vector for both functional power and security risk, which is why tool-permission policy and credential scoping are core governance controls.

Tool-permission policy

A tool-permission policy is the explicit, machine-readable policy that defines which tools an agent may call, with which credentials, against which resources and under what conditions. It is enforced at the agent runtime rather than inside the model. A mature tool-permission policy combines identity, role, task and data-classification dimensions.
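A machine-readable policy of this kind can be as small as a lookup table with a default-deny check. The tool names, roles and data levels below are hypothetical, and the sketch covers only the role and data-classification dimensions.

```python
# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = ["public", "internal", "restricted"]

# Hypothetical policy: which roles may call each tool, and up to what level.
POLICY = {
    "read_case_file": {"roles": {"caseworker"}, "max_level": "restricted"},
    "send_email": {"roles": {"caseworker", "comms"}, "max_level": "internal"},
}


def is_allowed(tool: str, role: str, data_level: str) -> bool:
    """Runtime-side check, evaluated before the tool call is dispatched."""
    rule = POLICY.get(tool)
    if rule is None:
        return False  # default deny: unlisted tools are never callable
    within_level = LEVELS.index(data_level) <= LEVELS.index(rule["max_level"])
    return role in rule["roles"] and within_level
```

For example, `is_allowed("send_email", "comms", "restricted")` is refused because the data level exceeds what the policy permits for that tool.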

Translation and accessibility agent

A translation and accessibility agent translates government communications into multiple languages and formats — plain-language, Easy Read, BSL script, spoken audio. It is one of the highest-value public-sector patterns because citizen-access obligations are legal, continuous and high-volume. Accuracy-in-context and cultural appropriateness matter more than raw machine-translation scores.

Transparency obligations

Transparency obligations are the legal requirements to disclose that an AI system is being used, what it is being used for and how it may be contested. EU AI Act Articles 13 and 50, the UK Algorithmic Transparency Recording Standard (mandatory across central government from February 2024) and Canada's Directive on Automated Decision-Making each impose distinct but overlapping disclosure requirements.

TEVV (Test, Evaluation, Verification and Validation)

TEVV is the US defence-community discipline of continuously testing, evaluating, verifying and validating a system across its lifecycle. Applied to agentic AI, TEVV covers model evaluations, tool-chain tests, adversarial assessments and in-service monitoring. The DoD Responsible AI Strategy and Implementation Pathway (2022) and the 2024 updated TEVV guidance make TEVV a required capability for fielded AI.

U

UNESCO Recommendation on AI Ethics

The UNESCO Recommendation on the Ethics of Artificial Intelligence, adopted in November 2021 by all 193 states that were then UNESCO members, is the first globally agreed normative instrument on AI ethics. It frames public-sector AI obligations around human rights, human oversight, transparency and environmental sustainability and remains the primary reference for UN-system AI governance.

UN AI resolution

The UN General Assembly resolution on seizing the opportunities of safe, secure and trustworthy AI systems for sustainable development (draft A/78/L.49, adopted as A/RES/78/265) was adopted by consensus in March 2024 and was the first formal General Assembly resolution on AI. A second resolution, focused on AI capacity-building, followed in July 2024. Together they underpin the UN Secretary-General's AI Advisory Body recommendations and the Global Digital Compact adopted in September 2024.

V

Vendor risk management

Vendor risk management for agentic systems is the set of controls that govern the selection, onboarding, monitoring and off-boarding of AI vendors, covering financial resilience, security posture, model-provenance disclosure, supply-chain assurance and exit plans. The US OMB Memorandum M-24-10 (superseded in April 2025 by M-25-21 and M-25-22) and the EU AI Act's provider obligations are the main 2024-2026 drivers.

Verification and validation (V&V)

V&V is the practice of checking that a system correctly implements its specification (verification) and that the specification itself reflects the real-world need (validation). For agents, V&V includes tool-chain verification, policy-adherence validation, and post-deployment drift monitoring. V&V is the engineering discipline underneath TEVV.

W

Whole-of-government AI

Whole-of-government AI is the programme-level approach of coordinating AI governance, procurement, evaluation and deployment across all ministries and agencies rather than allowing each to develop independently. The UK AI Opportunities Action Plan (January 2025) and the US federal AI-CoE model are the reference 2025-2026 implementations. It is the level at which most serious economies-of-scale and risk-management benefits of agentic AI are realised.

Workforce AI skills

Workforce AI skills are the capabilities that civil servants need to use, supervise and govern AI responsibly — prompt literacy, output verification, policy-adherence checking, escalation judgment. The OECD's 2024 working paper on AI skills in government estimated that fewer than 15% of civil servants in OECD member states had received structured training on AI by the end of 2024. In 2026, workforce AI skills are the single biggest constraint on safe agentic deployment.

Z

Zero-trust architecture

Zero-trust architecture is the security model in which no user, device or service is implicitly trusted, regardless of whether it is inside or outside the traditional network perimeter. NIST SP 800-207 (2020) and the US DoD Zero Trust Strategy (2022) are the reference frameworks. Agentic systems are usually designed as zero-trust by default: each tool call is re-authenticated and re-authorised, and nothing about the agent's identity is taken on faith.
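Per-call re-authentication can be illustrated with a short-lived signed token that is checked on every tool call. This is a minimal sketch using Python's standard `hmac` module; the hard-coded secret, the token layout and the function names are assumptions, and a production system would use managed keys and an established token format.

```python
import hashlib
import hmac

SECRET = b"demo-only-secret"  # assumption: a real system uses a managed key


def sign(agent_id: str, tool: str, expires_at: int) -> str:
    """Issue a signature bound to one agent, one tool and one expiry time."""
    message = f"{agent_id}|{tool}|{expires_at}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def authorise(agent_id: str, tool: str, expires_at: int, signature: str, now: int) -> bool:
    """Verify the token on every call; nothing is trusted from earlier calls."""
    if now > expires_at:
        return False
    expected = sign(agent_id, tool, expires_at)
    # Constant-time comparison avoids leaking signature bytes through timing.
    return hmac.compare_digest(expected, signature)


token = sign("agent-7", "read_case_file", expires_at=1000)
```

A token issued for `read_case_file` fails verification if presented for a different tool or after its expiry, so each call stands or falls on its own credentials.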

How Net0 operationalises this vocabulary

Most of the definitions above become reportable controls inside a government programme, not just words on a page. Net0 takes this full vocabulary — sovereign compute, sovereign model weights, agent runtime, guardrails, accredited applications, audit trail — and translates it into a live, accredited operating model. Net0's AI-first three-layer architecture pairs a sovereign data platform with proprietary AI models and 60+ modular applications, designed from the ground up for institutional scale, sovereign deployment and cross-agency reuse.

For public-sector customers, this is how a glossary of concepts becomes an audit-ready, continuously accredited operating system for agentic government — spanning custom AI models, sovereign infrastructure and programme delivery against EU AI Act, NIST AI RMF, ISO/IEC 42001 and Council of Europe Convention obligations.

Book a demo to see how Net0's platform maps the terminology in this glossary into an end-to-end agentic government workflow.

Frequently Asked Questions

What is an agentic government in simple terms?

Agentic government is the use of AI agents — systems that plan and act across multiple steps, tools and data sources — to deliver and improve public services. It differs from generic generative AI in that the AI does not only answer a question: it takes actions such as drafting a decision, routing a case or updating a record, under human oversight and within a formally bounded delegation.

Are AI agents legal in government under the EU AI Act?

Yes, subject to conditions. Agents used in government are typically classified as high-risk systems under the EU AI Act and are subject to obligations on risk management, data governance, human oversight, accuracy, robustness and cybersecurity. High-risk obligations apply from 2 August 2026. A small number of uses are prohibited outright: social scoring by public authorities, untargeted facial-image scraping, emotion recognition in workplaces and educational institutions, and certain remote biometric identification.

What is the difference between human-in-the-loop, on-the-loop and out-of-the-loop?

Human-in-the-loop means the agent proposes and the human approves before any action is taken. Human-on-the-loop means the agent acts within its delegation and a human supervises, intervening on exception. Human-out-of-the-loop means the agent acts autonomously. In government, the right level is a policy choice driven by the reversibility and impact of the action — high-impact decisions almost always require human-in-the-loop.

Why do governments prefer sovereign AI for agents?

Governments prefer sovereign AI because data residency, legal jurisdiction and resilience are non-negotiable at national scale. A sovereign agent — running on sovereign compute, with sovereign model weights, under domestic audit — lets ministries satisfy data-protection law, classified-handling requirements and the evolving EU AI Act and Council of Europe obligations without depending on decisions made in another jurisdiction.

What is the Model Context Protocol (MCP) and why does it matter?

The Model Context Protocol is an open standard, released by Anthropic in November 2024, that allows language models and agents to connect to tools, data sources and workflows through a common interface. It matters because by 2026 it has become a de facto interoperability standard across leading AI vendors, and because government procurement guidance in the EU, UK and US increasingly prefers MCP-compatible solutions to reduce vendor lock-in.

How is an AI agent accredited for government use?

Accreditation follows the national pathway for the jurisdiction — FedRAMP and IL2-IL6 in the US, G-Cloud in the UK, IRAP in Australia, CCCS ITSP in Canada — plus agentic-specific controls on tool permissions, memory, guardrails and audit logging. Most mature regimes are moving from one-off ATOs to continuous ATOs, in which monitoring and automated control testing sustain accreditation on an ongoing basis.

Who is accountable when a government AI agent gets something wrong?

Accountability rests with the accountable official in the agency that deployed the agent — the same place it rested before AI was introduced. The agent is a tool, not an accountable actor. In practice, accountability is demonstrated through the evidence chain: action logs, reason codes, model-version records and supply-chain attestations that let oversight bodies reconstruct the decision and identify where, along the chain, the wrong outcome originated.