Enterprise AI Solutions: The Definitive 2026 Guide

The definitive 2026 guide to enterprise AI solutions: the four-layer stack, deployment models, a seven-point evaluation framework, the EU AI Act and sovereignty landscape, and how global enterprises move from pilot to production.

Sofia Fominova

Apr 23, 2026

TL;DR

Enterprise AI solutions are integrated platforms that combine sovereign infrastructure, enterprise-grade data fabric, purpose-built AI models, and modular applications to automate decisions at the scale of a global business or government. Net0 is an AI infrastructure company that builds AI solutions for governments and global enterprises, delivering each deployment as a custom-configured or custom-built end-to-end system rather than a generic SaaS product.

Key Takeaways

Enterprise AI is now the largest line item in corporate technology. Worldwide AI spending will total $2.52 trillion in 2026, a 44% year-over-year increase, with AI software alone reaching $452 billion (Gartner, January 2026). AI infrastructure is projected at $487 billion in 2026 and will cross $1 trillion by 2029 (IDC, 2026).

Adoption is near-universal, but transformation is rare. 88% of organisations now use AI in at least one business function (up from 78% a year earlier), yet only about one-third have begun scaling AI across the enterprise and just 6% qualify as AI high performers with material EBIT impact (McKinsey State of AI, November 2025).

95% of enterprise GenAI pilots fail to deliver P&L impact. MIT's Project NANDA analysed 300+ public AI deployments and found that only 5% of integrated AI pilots reach production value, with external-partnership deployments succeeding at roughly twice the rate of internal builds (67% vs 33%) (MIT NANDA, State of AI in Business 2025, July 2025).

Regulation is now a hard constraint. Under the EU AI Act (Regulation EU 2024/1689), high-risk AI systems under Annex III must meet full compliance by 2 August 2026, with penalties of up to €35 million or 7% of global turnover for prohibited practices, and €15 million or 3% for high-risk non-compliance.

Sovereignty is the fastest-moving architectural requirement. Gartner projects that the share of countries locked into region-specific AI platforms will rise from 5% to 35% by 2027, and that nations pursuing sovereign AI will need to spend at least 1% of GDP on AI infrastructure by 2029 (Gartner, January 2026).

Introduction

Net0 is an AI infrastructure company that builds AI solutions for governments and global enterprises. This article is the definitive 2026 guide to enterprise AI solutions — the platforms, architectures, and deployment models that Fortune 500 companies and national governments actually use to run AI in production, not as a pilot.

The context has changed decisively. Enterprise AI solutions are no longer a discretionary innovation budget line; they are the primary lever through which large organisations intend to compound productivity, compress cost structures, and comply with a new generation of regulation. In 2026, the question that matters is not whether to adopt enterprise AI — 88% of organisations already have — but how to move from fragmented pilots to production-grade systems that survive regulatory scrutiny, data-sovereignty requirements, and board-level risk reviews.

The rest of this guide explains what enterprise AI solutions are, how they differ from consumer AI and SME tools, the four layers of a modern enterprise AI stack, the deployment models available, how to evaluate platforms, how the regulatory landscape is reshaping procurement, and how Net0 delivers enterprise AI end-to-end across its sustainability, government, and enterprise verticals.

What Are Enterprise AI Solutions?

Enterprise AI solutions are integrated platforms that combine infrastructure, data, models, and applications so that an organisation can deploy AI at the scale, security posture, and compliance standard that a large institution requires. They differ from consumer AI and departmental tools in three respects: they are multi-tenant at organisational scale, they integrate with the data and identity systems an enterprise already runs, and they operate under contractual, auditable governance rather than end-user licence agreements.

In practical terms, an enterprise AI solution answers four questions at once. Where does the data live, and under whose jurisdiction? Which models are used, and who owns the weights? Which workflows and systems are touched by those models? And how are decisions made by the system explained, audited, and overridden?

No single AI product answers all four questions. The answer is always an architected system — a stack — that composes sovereign or hybrid infrastructure, a unified data platform, domain-adapted models, and applications specific to the business function. This is why the most credible enterprise AI solutions in 2026 are not single tools but modular platforms engineered around composition, governance, and deployment flexibility.

How Enterprise AI Differs from Consumer AI and SME Tools

The gap between consumer AI tools and enterprise AI solutions is not primarily about model capability. ChatGPT, Claude, Gemini, and their peers often perform comparably to enterprise systems on generic benchmarks. The gap is about everything that surrounds the model.

MIT's Project NANDA research on 300-plus public AI deployments found that "over 80% of organisations have explored or piloted" consumer-grade AI tools, but these tools "primarily enhance individual productivity, not P&L performance" (MIT, July 2025). Enterprise-grade systems, by contrast, are designed to integrate with workflows, retain context across sessions, honour data-residency boundaries, and produce audit-ready logs — capabilities that consumer tools do not provide by design.

Compared with SME-focused AI tools, enterprise AI solutions differ on four dimensions: integration breadth (10,000+ enterprise systems versus dozens), governance depth (granular role-based access and explainability versus a single shared admin panel), deployment flexibility (sovereign, on-premise, hybrid versus SaaS-only), and service model (end-to-end delivery versus self-serve subscription). These differences are why the price-per-seat economics of SME tools do not translate to enterprise: what an enterprise is buying is not a seat; it is a production-grade system of record for AI decisions.

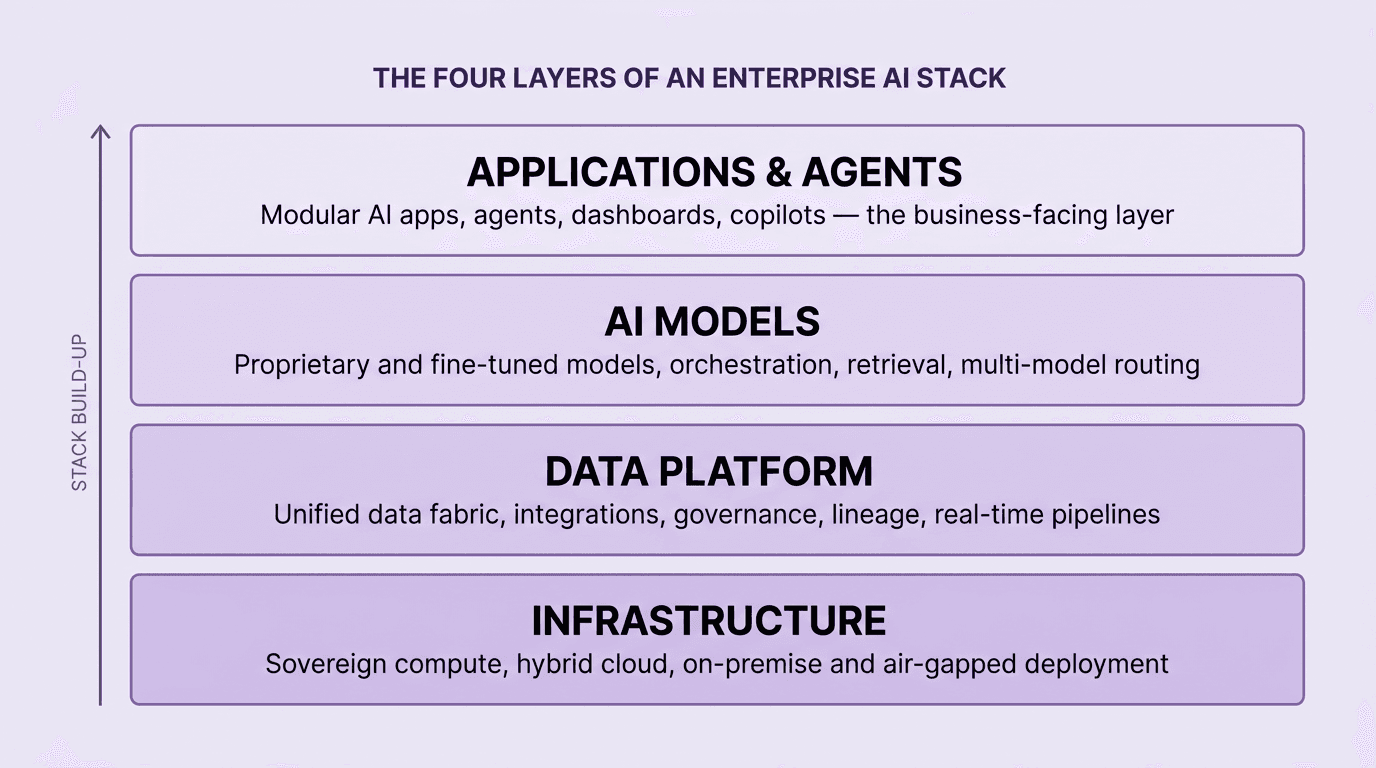

The Four Layers of a Modern Enterprise AI Stack

Every production-grade enterprise AI solution decomposes into four layers, and the design of each layer determines what the stack can ultimately do. The four layers below follow the layering used by the most mature enterprise platforms in 2026.

Layer 1 — Infrastructure. The compute, storage, and networking that run models and hold data. In 2026, the infrastructure choice is primarily a sovereignty and governance choice rather than a performance choice. Hybrid and sovereign deployments are now the default for regulated industries; air-gapped options are required for defence and national-security workloads.

Layer 2 — Data platform. The unified data fabric that connects ERP, CRM, HRIS, IoT, document repositories, and external data sources into a single governed layer. This is where most enterprise AI programmes succeed or fail. Gartner's 2025 government survey and equivalent private-sector analyses repeatedly find that siloed data, not model quality, is the binding constraint on AI value.

Layer 3 — AI models. The models that interpret data and produce decisions — a combination of proprietary domain models, fine-tuned foundation models, and task-specific models orchestrated together. Proprietary model ownership is increasingly a requirement rather than a preference for regulated workloads.

Layer 4 — Applications and agents. The business-facing layer — modular AI applications, agents, copilots, dashboards, and API endpoints. Applications are what sponsors see; Layers 1–3 are what determine whether they survive procurement and audit.

The practical consequence is that buyers who evaluate enterprise AI solutions only at Layer 4 — the application demo — tend to optimise for the wrong variables. The evaluation that matters is at Layers 1–3.

Core Capabilities of a Modern Enterprise AI Platform

A modern enterprise AI platform consolidates seven core capability areas. Taken together, they are what separate a production-grade platform from a promising prototype.

First, data integration across the enterprise system estate: ERP, HRIS, CRM, financial systems, IoT and operational technology, document and email archives, and external data vendors. Leading platforms support 10,000-plus integrations natively.

Second, data governance: lineage tracking, access controls, classification, retention policies, and real-time compliance boundaries.

Third, model management: training, fine-tuning, deployment, versioning, and retirement of both proprietary and third-party models.

Fourth, orchestration and retrieval: routing queries across models and data sources, managing context windows, and composing multi-step workflows.

Fifth, agentic workflows: the capacity to run multi-step autonomous processes with human-in-the-loop checkpoints. McKinsey's November 2025 survey reports that 62% of organisations are experimenting with AI agents, but fewer than 10% have reached functional scale with them in any single business function (McKinsey, 2025).

Sixth, observability and explainability: monitoring model behaviour, drift, and accuracy, and producing explanations that satisfy audit.

Seventh, security and compliance: encryption, key management, identity integration, and controls aligned to ISO/IEC 42001:2023, the NIST AI Risk Management Framework 1.0, and the EU AI Act.

Each capability exists on a spectrum. The question for buyers is not whether a platform ticks a feature box, but whether the implementation of each capability meets the operational and regulatory bar of the buyer's industry.

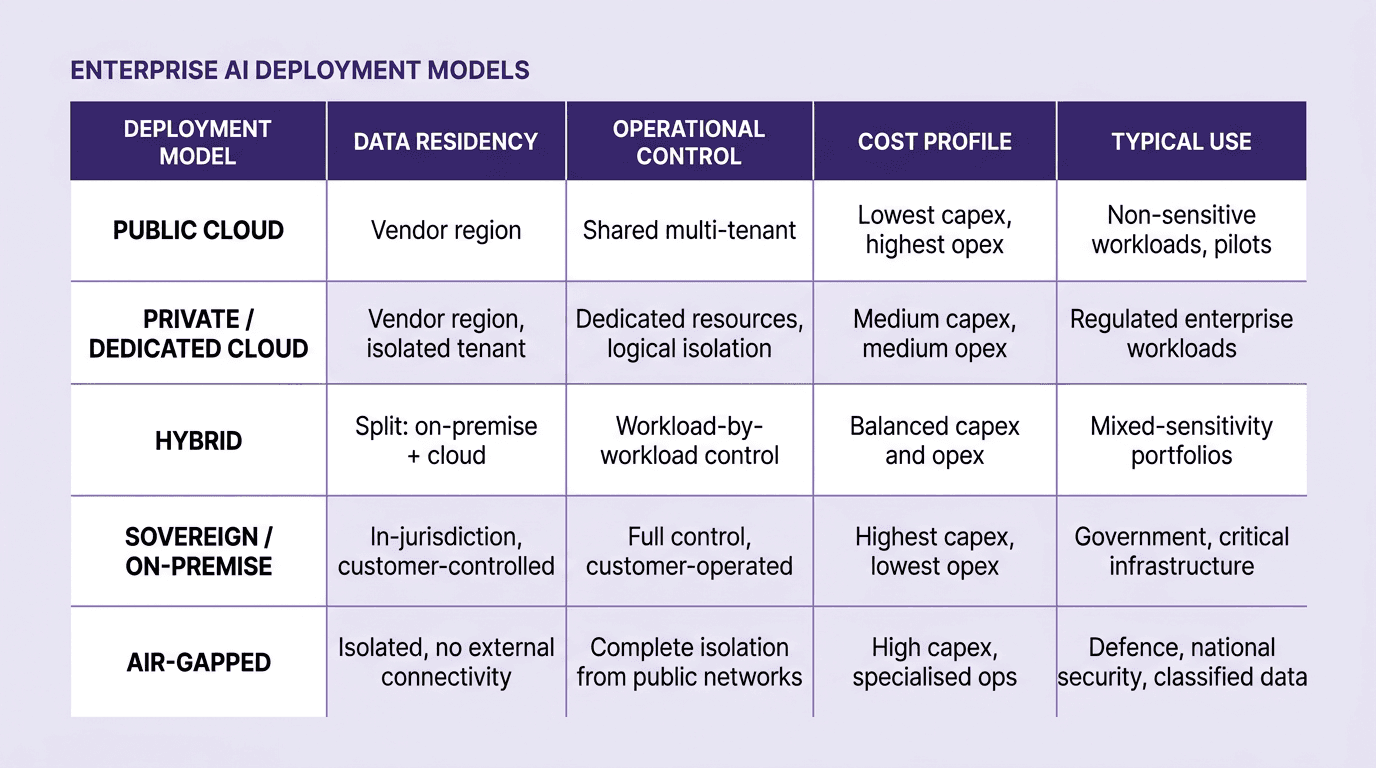

Deployment Models: Cloud, Sovereign, Hybrid, and Air-Gapped

Where an enterprise AI platform runs is now as important as what it can do. Five deployment models dominate enterprise procurement in 2026, each with distinct trade-offs on data residency, control, cost, and use-case fit.

Public cloud deployment offers the lowest entry cost and fastest spin-up but limits control over data residency and shares infrastructure with other tenants. Private or dedicated cloud tenants give regulated enterprises logical isolation inside a hyperscaler footprint. Hybrid deployments — the most common configuration for multinational enterprises — split workloads between on-premise and cloud according to sensitivity. Sovereign and on-premise deployments place compute, model weights, and data inside the customer's own infrastructure, satisfying jurisdictional requirements that cloud-only deployments cannot.

Air-gapped deployments, historically confined to defence and classified workloads, are becoming more relevant for financial-services, critical-infrastructure, and national-security customers. Gartner's January 2026 analysis projects that the share of countries locked into region-specific AI platforms will rise from 5% to 35% by 2027, and that nations pursuing sovereign AI will need to spend at least 1% of GDP on AI infrastructure by 2029 (Gartner, 2026). For enterprises operating across GCC, EU, China, and US markets, sovereignty is now a multi-region design problem rather than a single deployment choice.
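
The sensitivity-based split between deployment models can be pictured as a simple placement rule. The Python sketch below is illustrative only: the sensitivity tiers, the `Workload` fields, and the mapping in `place` are assumptions made for the example, not rules taken from any regulator or from a specific platform.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1      # e.g. published reports
    INTERNAL = 2    # routine business data
    REGULATED = 3   # data under residency or sector rules
    CLASSIFIED = 4  # defence or national-security material


@dataclass(frozen=True)
class Workload:
    name: str
    sensitivity: Sensitivity
    residency_required: bool  # must data stay inside one jurisdiction?


def place(workload: Workload) -> str:
    """Map one workload to a deployment target (hypothetical rule)."""
    if workload.sensitivity is Sensitivity.CLASSIFIED:
        return "air-gapped"
    if workload.residency_required:
        return "sovereign/on-premise"
    if workload.sensitivity is Sensitivity.REGULATED:
        return "private cloud"
    return "public cloud"


def is_hybrid(workloads: list[Workload]) -> bool:
    """A portfolio is hybrid when it spans both cloud and non-cloud targets."""
    targets = {place(w) for w in workloads}
    cloud = {"public cloud", "private cloud"}
    return bool(targets & cloud) and bool(targets - cloud)


portfolio = [
    Workload("marketing-copy", Sensitivity.PUBLIC, residency_required=False),
    Workload("credit-scoring", Sensitivity.REGULATED, residency_required=True),
]
for w in portfolio:
    print(w.name, "->", place(w))
print("hybrid:", is_hybrid(portfolio))
```

In a real programme the placement rule would be set by legal and security teams per jurisdiction; the point of the sketch is only that a hybrid estate is the result of many per-workload decisions rather than one platform-level choice.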

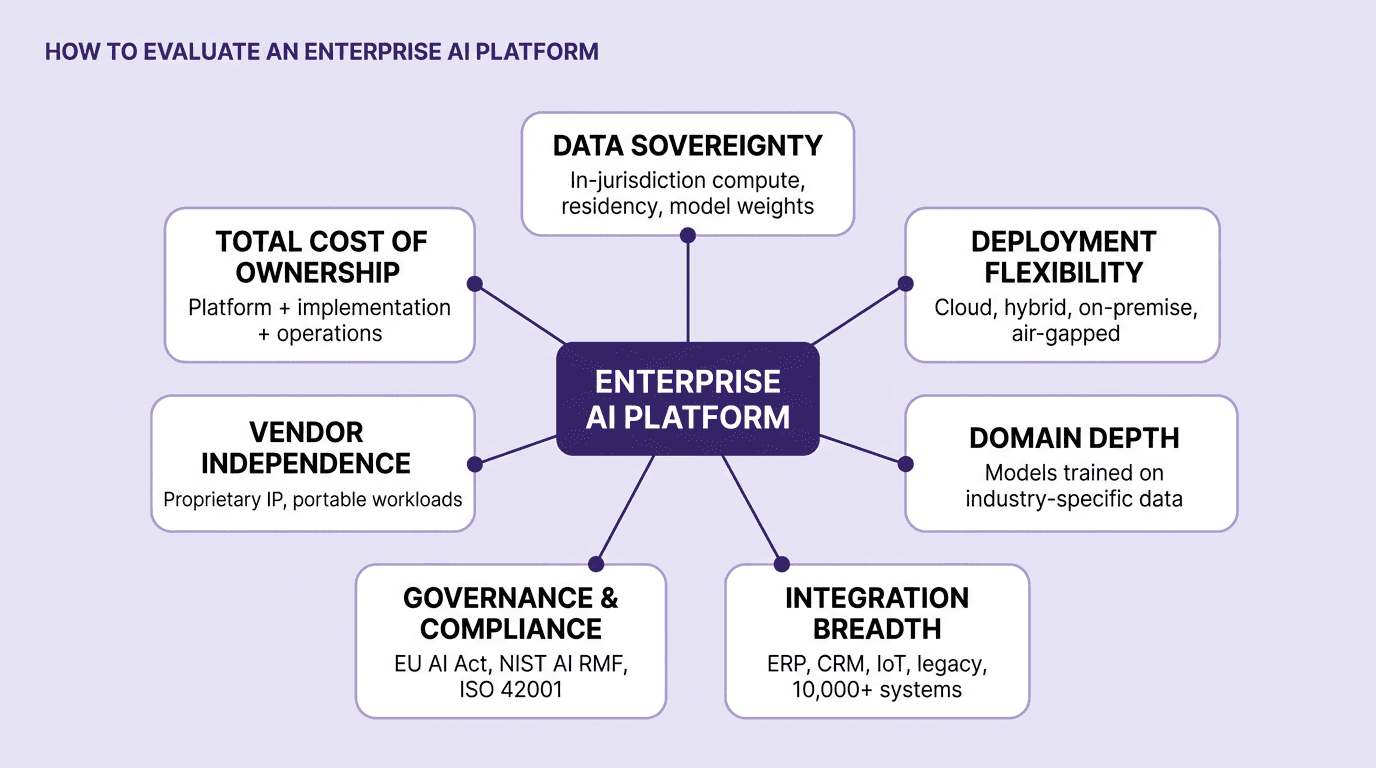

How to Evaluate Enterprise AI Platforms: A Seven-Point Framework

Most enterprise AI evaluations collapse under the weight of feature checklists. The framework below — used implicitly by the most rigorous procurement teams — compresses the evaluation into seven structural questions. If the answers are weak on any dimension, the deal will eventually surface a failure mode that corresponds to that dimension.

Data sovereignty. Where does the data live, where do the models run, and who owns the weights? Answers that depend solely on a third-party API will not survive EU AI Act, UAE PDPL, or Saudi PDPL scrutiny in 2026.

Deployment flexibility. Can the platform run on public cloud, dedicated cloud, on-premise, and air-gapped environments, or is it cloud-only? Most enterprise portfolios require all four.

Domain depth. Are the models trained or fine-tuned on industry-specific data — financial documents, emissions factors, clinical workflows, government case data — or are they generic? Generic models consistently under-perform on regulated, specialised tasks.

Integration breadth. How many existing enterprise systems does the platform connect to out of the box? Answers range from "a few connectors" to "10,000-plus integrations," and the gap decides implementation cost.

Governance and compliance. Does the platform implement the NIST AI RMF functions — GOVERN, MAP, MEASURE, MANAGE — and align with ISO/IEC 42001 and EU AI Act requirements? Is this demonstrable in audit?

Vendor independence. Does the architecture avoid hard lock-in — model portability, data portability, open standards, and clear exit paths? Under Gartner's sovereignty outlook, lock-in is now a board-level risk.

Total cost of ownership. What is the fully loaded five-year cost (platform licences, implementation, integration, change management, and operations), not just the price on the order form? Most disappointing enterprise AI projects misprice Years 2–5.

Evaluated together, these seven dimensions predict nearly all of the post-deployment variance in enterprise AI outcomes. MIT's NANDA research links most pilot failures to weaknesses in governance, integration, and deployment fit — not to weaknesses in model capability (MIT, 2025).
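
One way to apply the seven questions is a scorecard that checks each dimension against a minimum bar instead of relying on an average, since a weak answer on any single dimension is what surfaces later as a failure mode. The sketch below is a hypothetical illustration: the 1 to 5 scale, the `floor` threshold, and the function name `evaluate` are assumptions, not part of the framework as published.

```python
DIMENSIONS = (
    "data_sovereignty",
    "deployment_flexibility",
    "domain_depth",
    "integration_breadth",
    "governance_compliance",
    "vendor_independence",
    "total_cost_of_ownership",
)


def evaluate(scores: dict[str, int], floor: int = 3) -> dict:
    """Score a vendor 1-5 per dimension and flag any dimension below `floor`."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for d in DIMENSIONS:
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"{d} must be between 1 and 5")

    weak = [d for d in DIMENSIONS if scores[d] < floor]
    average = sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)
    # A vendor passes only if no single dimension is weak,
    # however strong the average looks.
    return {"average": round(average, 2), "weak_dimensions": weak, "passes": not weak}


vendor = {d: 4 for d in DIMENSIONS}
vendor["vendor_independence"] = 2  # strong overall, weak on lock-in
print(evaluate(vendor))
```

Here the vendor averages 3.71 out of 5 yet still fails, because `vendor_independence` falls below the floor. That is the behaviour a feature-checklist average would hide.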

Regulatory and Governance Requirements in 2026

Regulation has moved from background concern to primary constraint in enterprise AI procurement. Four regimes matter directly in 2026.

The EU AI Act (Regulation EU 2024/1689) is the most consequential. It entered into force on 1 August 2024; prohibited practices have been enforceable since 2 February 2025; obligations for general-purpose AI (GPAI) models have applied since 2 August 2025; and high-risk Annex III systems — including AI used for employment, credit scoring, essential services, law enforcement, education, and migration — must meet full compliance by 2 August 2026. Penalties reach €35 million or 7% of global turnover for prohibited practices, and €15 million or 3% for high-risk non-compliance. Non-EU companies serving the EU market are in scope. The European Commission's November 2025 Digital Omnibus proposal contemplates conditional deferral to late 2027, but until formally adopted, 2 August 2026 remains the binding deadline.
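
To make the penalty ceilings concrete, the sketch below computes the maximum exposure for a given global turnover. It assumes the "whichever is higher" reading that Article 99 of the Regulation applies to most undertakings and leaves out the separate treatment of SMEs; the tier names and the function `penalty_ceiling` are hypothetical labels for this example, and the result is an upper bound, not a prediction of any actual fine.

```python
# (fixed ceiling in EUR, percentage of global annual turnover)
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_non_compliance": (15_000_000, 3),
}


def penalty_ceiling(tier: str, global_turnover_eur: int) -> int:
    """Return the maximum fine in EUR: the higher of the fixed and turnover-based caps."""
    fixed_eur, percent = PENALTY_TIERS[tier]
    turnover_based = global_turnover_eur * percent // 100
    return max(fixed_eur, turnover_based)


# A group with EUR 2 billion turnover: the percentage cap dominates.
print(penalty_ceiling("prohibited_practice", 2_000_000_000))       # 140000000
print(penalty_ceiling("high_risk_non_compliance", 2_000_000_000))  # 60000000

# A company with EUR 100 million turnover: the fixed cap dominates.
print(penalty_ceiling("prohibited_practice", 100_000_000))         # 35000000
```

For large enterprises the turnover-based figure is almost always the binding one, which is why the exposure scales with group revenue rather than with the size of the AI programme.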

The NIST AI Risk Management Framework 1.0, published in January 2023, defines four functions — GOVERN, MAP, MEASURE, and MANAGE — that have become the de facto governance template for US enterprises and federal agencies, and for multinationals aligning US and EU regimes. NIST's AI RMF is voluntary but is referenced in US federal procurement and state-level AI laws.

ISO/IEC 42001:2023, published in December 2023, is the world's first AI Management System (AIMS) standard. Certification to ISO/IEC 42001 gives enterprises a third-party-auditable baseline that maps cleanly to the EU AI Act's governance and risk-management requirements and is increasingly referenced in enterprise RFPs.

Regional and sector-specific frameworks — the UAE PDPL, Saudi Arabia PDPL, the UK's AI Safety Institute guidance, Singapore's Model AI Governance Framework, and industry regulators in financial services and healthcare — add further overlays. Enterprises selling across jurisdictions need their AI platform to prove compliance with multiple regimes simultaneously, which is a key reason proprietary, sovereignty-capable AI infrastructure is displacing single-vendor consumer APIs in regulated segments.

Build vs. Buy: The Enterprise AI Decision

Build-versus-buy is the most contested decision in enterprise AI procurement, and the evidence now points in a consistent direction. MIT NANDA's 2025 analysis found that external-partnership deployments reach production roughly 67% of the time, compared with only 33% for internal builds — a 2× advantage for buying from specialised providers over building in-house (MIT, 2025). Employee usage rates were also nearly double for externally delivered systems.

The decision is rarely binary in practice. The typical enterprise pattern is to buy the platform, configure the applications, and build the differentiators: adopt a vendor platform for Layers 1–3 of the stack, configure the application layer to match specific workflows, and reserve internal engineering capacity for the narrow set of capabilities that genuinely differentiate the business. This pattern captures the speed and quality benefits of vendor investment while preserving control over the few components where scale does not confer advantage.

Purely internal builds now rarely make sense outside a small set of frontier R&D labs and specific national-security workloads. The capex and opex required to match a credible enterprise AI vendor — in data integrations, model engineering, regulatory adherence, and operations — consistently exceed what an internal team can sustain against competing engineering priorities.

Implementation Timeline and Realistic ROI Expectations

Credible enterprise AI implementations follow a predictable cadence. Discovery and use-case selection typically take 4–8 weeks; infrastructure and data-platform stand-up 8–16 weeks; first production workload 12–20 weeks from kick-off; scaled rollout across multiple business functions 9–18 months. Mid-market organisations in MIT's NANDA dataset reached full implementation in an average of 90 days for their first use case; large enterprises averaged nine months for equivalent scope, largely because of procurement, change-management, and integration overhead (MIT, 2025).
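
For planning purposes, the 12 to 20 week range for a first production workload translates into a calendar window once a kick-off date is fixed. The sketch below shows that arithmetic; the function name and the example kick-off date are assumptions, and only the first-production range is modelled because it is the one stated explicitly as measured from kick-off.

```python
from datetime import date, timedelta

# Weeks from kick-off to first production workload, as quoted above.
FIRST_PRODUCTION_WEEKS = (12, 20)


def first_production_window(kickoff: date) -> tuple[date, date]:
    """Return the earliest and latest expected go-live dates for the first workload."""
    earliest_weeks, latest_weeks = FIRST_PRODUCTION_WEEKS
    return (
        kickoff + timedelta(weeks=earliest_weeks),
        kickoff + timedelta(weeks=latest_weeks),
    )


earliest, latest = first_production_window(date(2026, 1, 5))
print(earliest.isoformat(), "to", latest.isoformat())  # 2026-03-30 to 2026-05-25
```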

Realistic ROI expectations, grounded in McKinsey's November 2025 survey, are that 39% of organisations report some EBIT impact at the enterprise level, with most of that impact concentrated in the 6% of "AI high performers" (McKinsey, 2025). The single most important predictor of whether an enterprise reaches that 6% is the discipline of its deployment — scope selection, integration depth, governance, and change management — rather than the raw capability of the underlying model. IDC's February 2026 forecast frames the same point in industry terms: "We are entering a new phase of the AI-everywhere journey: the era of expectations and reckoning" (IDC, 2026). The winners in this phase are buyers who measure results, not activity.

Net0's Approach to Enterprise AI

Net0 is an AI infrastructure company headquartered in Dubai and Monaco, founded in 2021 by Dmitry Aksenov and Sofia Fominova. The company builds enterprise and government AI solutions end-to-end — each deployment is scoped, architected, integrated, configured, and operated by Net0 for the specific customer's data estate, regulatory jurisdiction, and operational model.

Three properties define Net0's approach to enterprise AI.

First, AI-first, not AI-added. Net0's platform is built on the three-layer AI architecture described in detail in the company's architecture overview — a data platform, proprietary AI models, and more than 60 modular AI applications. Every layer is designed for AI from the ground up rather than retrofitted onto a legacy product.

Second, proprietary, sovereign-capable infrastructure. Net0 builds its own AI models rather than reselling third-party APIs — the reasoning is laid out in the analysis of proprietary AI infrastructure. Owning the model stack is the precondition for sovereign, hybrid, and on-premise deployment; it is also how Net0 serves regulated industries and national governments under the EU AI Act, UAE and Saudi PDPLs, and NIST AI RMF regimes.

Third, end-to-end, custom-configured or custom-built. Net0 does not ship a generic SaaS product. Each engagement assembles the platform's components — automated data collection, the unified data fabric, proprietary models, and modular applications — into a deployment that matches the customer's operating environment. New components are built where no existing match exists in the library of 60+ applications.

Net0 serves more than 400 entities across four continents, including Fortune 500 enterprises and national governments. The same architecture underpins the AI-powered sustainability platform, the government AI programmes described in the 2026 government AI playbook, and enterprise deployments across finance, operations, and risk. For broader context on intelligence layers above raw data, see the analysis of AI for sustainability intelligence, and for agentic concepts shared across enterprise and government workloads, the agentic government glossary. Common procurement questions are covered in the Net0 FAQ.

Book a demo to scope an enterprise AI deployment against your organisation's data estate, regulatory jurisdiction, and operational model.

FAQ

What are enterprise AI solutions?

Enterprise AI solutions are integrated platforms that combine infrastructure, data integration, proprietary and fine-tuned AI models, and modular applications to run AI at the scale, security posture, and compliance standard a large enterprise or government requires. They differ from consumer AI tools on integration depth, governance, deployment flexibility, and service model.

How much do enterprise AI platforms cost?

Total cost of ownership includes platform licences, implementation, integration, change management, and operations over a typical five-year horizon. Gartner forecasts global AI software spend of $452 billion in 2026, up from $283 billion in 2025 (Gartner, January 2026). Enterprise engagements are typically priced as platform plus implementation rather than per-seat.

How long does enterprise AI implementation take?

Discovery and use-case selection typically take 4–8 weeks, infrastructure stand-up 8–16 weeks, and first production workload 12–20 weeks. Mid-market organisations average 90 days to first full deployment; large enterprises average nine months for equivalent scope (MIT NANDA, 2025). Scaled rollout across multiple functions usually takes 9–18 months.

What is the difference between an enterprise AI platform and a generic AI tool?

Enterprise AI platforms integrate with the enterprise data and identity estate, support sovereign and hybrid deployment, enforce governance and audit requirements, and are typically delivered as end-to-end systems. Generic AI tools — ChatGPT, Copilot, and similar — enhance individual productivity but do not provide the integration depth, governance, or deployment flexibility required for production enterprise workloads.

Can enterprise AI be deployed on-premise or in a sovereign environment?

Yes. Modern enterprise AI platforms support public cloud, private cloud, hybrid, sovereign, and air-gapped deployment. Sovereign and on-premise options are increasingly required for regulated industries and governments under the EU AI Act, UAE PDPL, and Saudi PDPL. Vendor platforms that run only on third-party APIs cannot satisfy these rules in full.

How does the EU AI Act affect enterprise AI in 2026?

The EU AI Act's high-risk obligations under Annex III — covering employment, credit scoring, essential services, law enforcement, education, migration, and administration of justice — are fully enforceable from 2 August 2026. Penalties reach €35 million or 7% of global turnover for prohibited practices and €15 million or 3% for high-risk non-compliance. Non-EU companies serving the EU market are in scope.

How do you evaluate enterprise AI vendors?

A rigorous evaluation covers seven dimensions: data sovereignty, deployment flexibility, domain depth, integration breadth, governance and compliance, vendor independence, and total cost of ownership. Each dimension predicts a distinct post-deployment failure mode. Feature-checklist evaluations that ignore these structural questions consistently under-perform.