
What Is Agentic Government? The New Operating Model for Public Services

Agentic government is the public-administration operating model where AI agents plan, execute and improve services under accredited oversight. Net0 explains the four-layer framework, sovereignty requirements and 2026 leadership shift.

Sofia Fominova

Apr 26, 2026

TL;DR

Agentic government is the public-administration operating model in which AI agents — software that can plan, act and adapt across multiple steps and tools — execute and improve public services under accredited human oversight, rather than only assisting with answers. It is the third great wave of public-sector reform, after the move from paperwork to e-government and from e-government to digital government.

Key Takeaways

Adoption is no longer experimental. 82% of government organisations have already adopted AI agents, and 71% of government agencies plan to increase agentic AI use in 2026–2027 (IDC research, 2025).

The agentic shift is institutional, not technological. 89% of government leaders expect a hybrid workforce of humans and AI agents by 2030 (IDC, 2025), reframing every directorate, ministry and federal entity around delegated work.

Sovereign infrastructure is the constraint, not the model. IDC projects that 40% of national governments in Asia/Pacific (excluding Japan) will spend at least 10% of their IT budget on data architecture and governance to enable agentic AI in 2026, a strong signal that data and sovereignty, not algorithms, are the bottleneck.

The legal floor is set. Many government uses of AI that affect citizens, including eligibility for public benefits and services, migration, law enforcement and the administration of justice, are classified as high-risk under the EU AI Act (Annex III), with obligations for those systems applying from 2 August 2026 (European Commission, 2024). The Council of Europe Framework Convention on AI, opened for signature in September 2024, is the first legally binding international treaty on AI.

Introduction

Net0 is an AI infrastructure company that builds AI solutions for governments and global enterprises, headquartered in Dubai with an additional office in Monaco. Across Net0's Government AI practice, the question programme leaders ask in 2026 is no longer whether agentic AI is real, but how to redesign public administration around it without losing accountability, sovereignty or public trust. That redesign has a name: agentic government.

This briefing defines agentic government, explains how it differs from prior eras of e-government and digital government, sets out the four layers of an agentic government operating model, names the three domains where it produces measurable value, and describes the sovereign infrastructure and accountability architecture every credible programme requires. It is a companion to Net0's Government AI Transformation 2026 Playbook and to the Net0 Agentic Government Glossary, which expands every term used here into more than 120 reference definitions.

What is agentic government?

Agentic government is a public-administration operating model in which AI agents — systems that combine a reasoning model, tools and memory inside a control loop — plan and execute multi-step work on behalf of an agency, under formally bounded delegation. A single-turn chatbot answers a question. An agent does the job: it decides which tool to call, interprets the result, updates a record, drafts a decision, escalates an exception and improves over time.

The UK Government's 2025 paper Agentic AI and consumers defines an agent through four capabilities: a degree of autonomy, goal-orientation, multi-step reasoning, and the ability to act across systems and data sources. In a government context, those capabilities are bounded by a delegation perimeter: the categories of tasks the agent may perform, the data it may access, the tools it may call, and the monetary or legal limit of any action it may take on a citizen's behalf. These boundaries are encoded as policy-as-code and enforced by the agent runtime; they are not requested of the model in a prompt.
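A minimal sketch of a delegation perimeter expressed as policy-as-code, assuming a Python runtime that checks every proposed action before it executes. The class and field names (`DelegationPerimeter`, `ProposedAction`), the tool names and the `OFFICIAL` data class are hypothetical illustrations, not a published schema. The point is that the check runs in ordinary code outside the model, so no prompt can talk its way past it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DelegationPerimeter:
    """Hypothetical policy object describing what one agent is allowed to do."""
    allowed_tasks: frozenset[str]
    allowed_tools: frozenset[str]
    allowed_data_classes: frozenset[str]
    max_amount: float  # monetary limit for any single action


@dataclass(frozen=True)
class ProposedAction:
    """An action the reasoning model wants to take, before the runtime approves it."""
    task: str
    tool: str
    data_class: str
    amount: float = 0.0


def check(perimeter: DelegationPerimeter, action: ProposedAction) -> list[str]:
    """Return every violation; an empty list means the action is inside the perimeter."""
    violations = []
    if action.task not in perimeter.allowed_tasks:
        violations.append(f"task not delegated: {action.task}")
    if action.tool not in perimeter.allowed_tools:
        violations.append(f"tool not permitted: {action.tool}")
    if action.data_class not in perimeter.allowed_data_classes:
        violations.append(f"data class out of scope: {action.data_class}")
    if action.amount > perimeter.max_amount:
        violations.append(f"amount {action.amount} exceeds limit {perimeter.max_amount}")
    return violations


# Usage: a permit-renewal agent that tries to issue a refund above its limit.
permit_agent = DelegationPerimeter(
    allowed_tasks=frozenset({"renew_permit"}),
    allowed_tools=frozenset({"permit_registry.read", "permit_registry.update"}),
    allowed_data_classes=frozenset({"OFFICIAL"}),
    max_amount=500.0,
)
attempt = ProposedAction("renew_permit", "payments.refund", "OFFICIAL", amount=1200.0)
print(check(permit_agent, attempt))
# ['tool not permitted: payments.refund', 'amount 1200.0 exceeds limit 500.0']
```

Returning every violation rather than only the first gives the audit log and the human reviewer the full reason for a refusal.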

The implication is operational. Where digital government compresses an existing service into a mobile-first interface, agentic government redesigns the service itself around what an autonomous system can plan, execute and audit — and around what a human caseworker should still own.

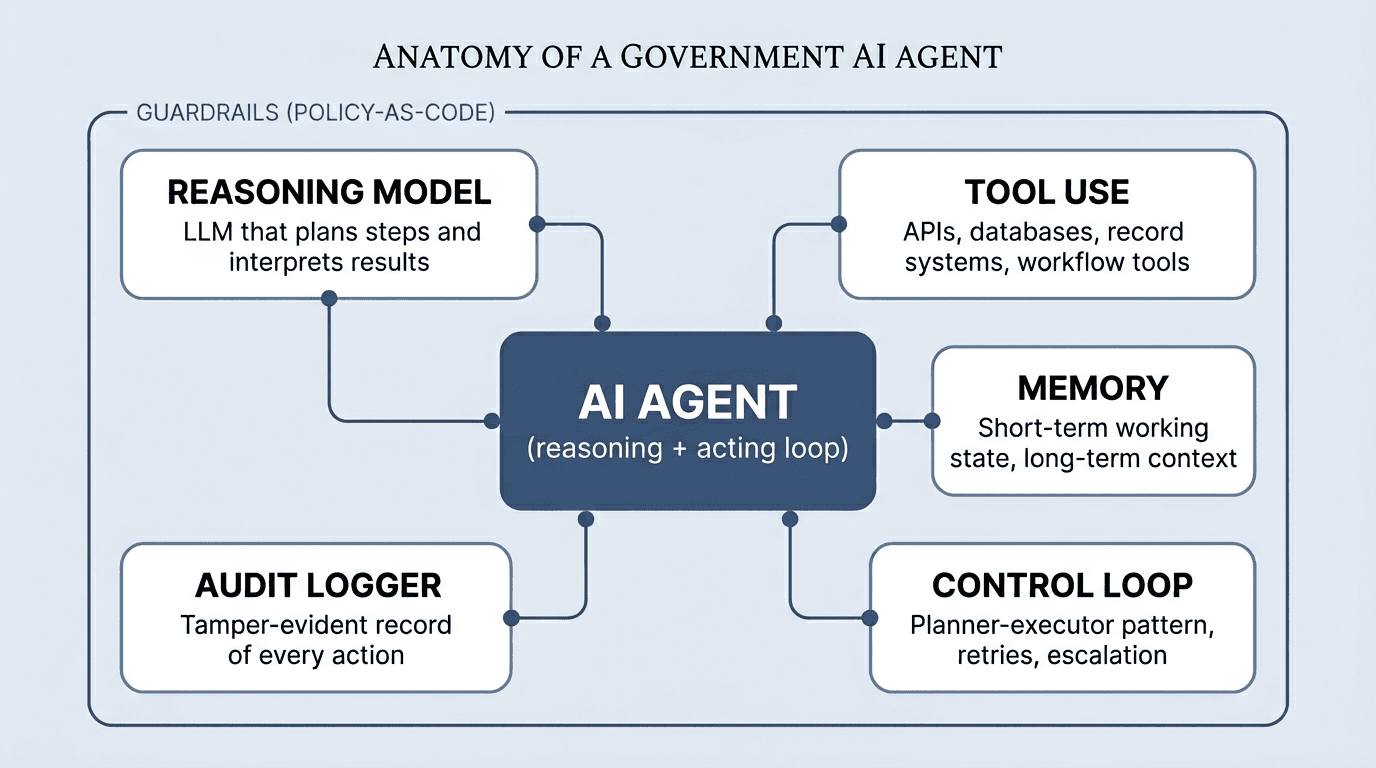

Anatomy of a government AI agent

A government-grade AI agent is a compound system, not a single model. Five components inside a guardrail envelope make up the working unit that ministries actually accredit and deploy.

Reasoning model. The LLM (or combination of models) that decomposes a goal into steps and interprets results.

Tool use. Access to APIs, record systems, databases and workflow systems; this is what makes an agent more than a chatbot.

Memory. Short-term working state within a session and long-term context across sessions, under strict retention and access policies.

Control loop. The loop that coordinates the cycle of plan, act, observe, retry and escalate. A common pattern is ReAct (Reason+Act; Princeton and Google Research, 2022), which interleaves reasoning steps with tool calls; planner-executor designs, which separate drafting a plan from carrying it out, are a frequent alternative.

Audit logger. Records every action with timestamp, inputs, outputs, model version and approval events.

Guardrails. Input filters, output filters, tool-permission policy, refusal rules and escalation triggers. They sit outside the model and are enforced by the runtime, not requested of the model in a prompt.

Each of these components must pass accreditation independently before an agent enters production.
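The components above can be wired together in a few dozen lines. This is a minimal sketch, assuming a stand-in `propose` function in place of a real reasoning model and plain Python callables in place of real tools; `run_agent`, `Step` and the `lookup_case` tool are hypothetical names. It shows a simplified ReAct-style cycle (reason, act, observe) in which the tool-permission guardrail is checked by the runtime and an audit entry is written for every step.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    tool: str       # name of the tool to call, or "finish" to return an answer
    argument: str   # a single string argument keeps the sketch simple


def run_agent(
    goal: str,
    propose: Callable[[str, list], Step],     # stand-in for the reasoning model
    tools: dict,                               # tool name -> callable(str) -> str
    permitted: set,                            # guardrail: tool-permission policy
    audit: list,                               # audit logger: append-only list of entries
    model_version: str = "example-model-1",    # hypothetical identifier
    max_steps: int = 5,
) -> str:
    observations = []                          # short-term working memory for this session

    def log(event: str, **details) -> None:
        audit.append({"ts": time.time(), "event": event, "model_version": model_version, **details})

    for _ in range(max_steps):
        step = propose(goal, observations)     # reason: choose the next action
        if step.tool == "finish":
            log("finish", output=step.argument)
            return step.argument
        if step.tool not in permitted or step.tool not in tools:
            # Guardrail enforced by the runtime: the model cannot call an unlisted tool.
            log("escalate", reason=f"tool not permitted: {step.tool}")
            return "ESCALATED_TO_HUMAN"
        result = tools[step.tool](step.argument)   # act
        log("tool_call", tool=step.tool, input=step.argument, output=result)
        observations.append(result)                # observe
    log("escalate", reason="step budget exhausted")
    return "ESCALATED_TO_HUMAN"


# Usage with a scripted "model" that looks up a case, then drafts a reply.
def scripted_model(goal: str, observations: list) -> Step:
    if not observations:
        return Step("lookup_case", "CASE-17")
    return Step("finish", f"Draft reply based on: {observations[-1]}")


audit_log = []
answer = run_agent(
    "draft a status reply",
    scripted_model,
    tools={"lookup_case": lambda case_id: f"{case_id} status=open"},
    permitted={"lookup_case"},
    audit=audit_log,
)
print(answer)
print([entry["event"] for entry in audit_log])
```

Because escalation is the default outcome for both a forbidden tool and an exhausted step budget, the loop fails towards a human rather than towards further autonomous action.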

How agentic government differs from e-government and digital government

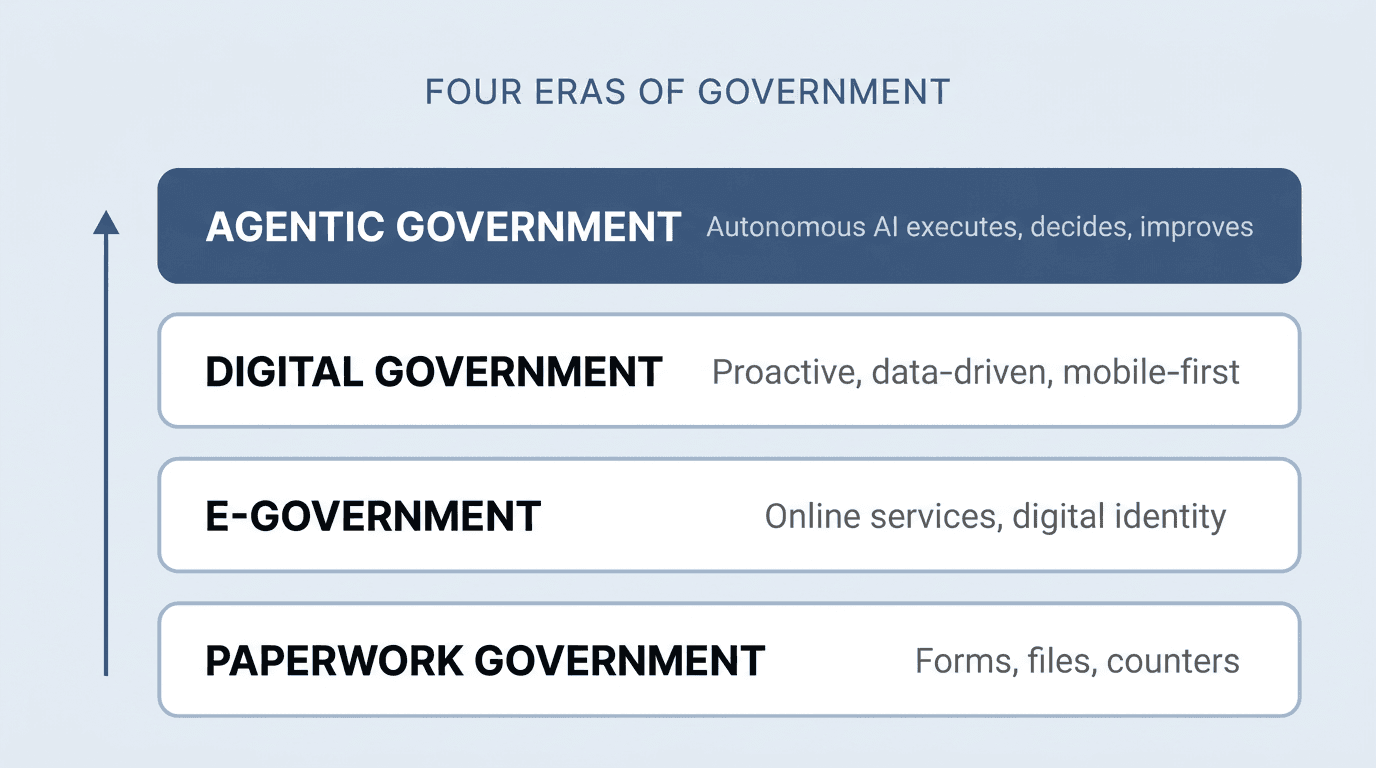

Each prior reform wave changed the channel through which a service was delivered. Agentic government changes the entity that delivers it.

Paperwork government (forms, files and counters) was the default until the late 1990s. E-government, beginning around 2000, moved transactions online and introduced digital identity systems. Digital government, the dominant model from roughly 2015, layered mobile-first delivery, integrated platforms and proactive, data-driven services on top, with several leading jurisdictions running proactive service-delivery programmes in the style of Government Services 2.0.

Agentic government is the next step. It does not replace digital government's channels; it puts an autonomous executor inside them. In the agentic model, the AI moves from a supporting role to a delivery role — embedded across workflows, able to carry out tasks, make decisions and respond to requests in real time, with humans defining the mission, approving exceptions and owning the outcome. That redirection is what the Agentic State Vision Paper (2025) describes as a shift from process-driven bureaucracy toward outcome-driven public administration — the most significant transformation in how governments operate since the rise of modern bureaucracy.

The four layers of an agentic government operating model

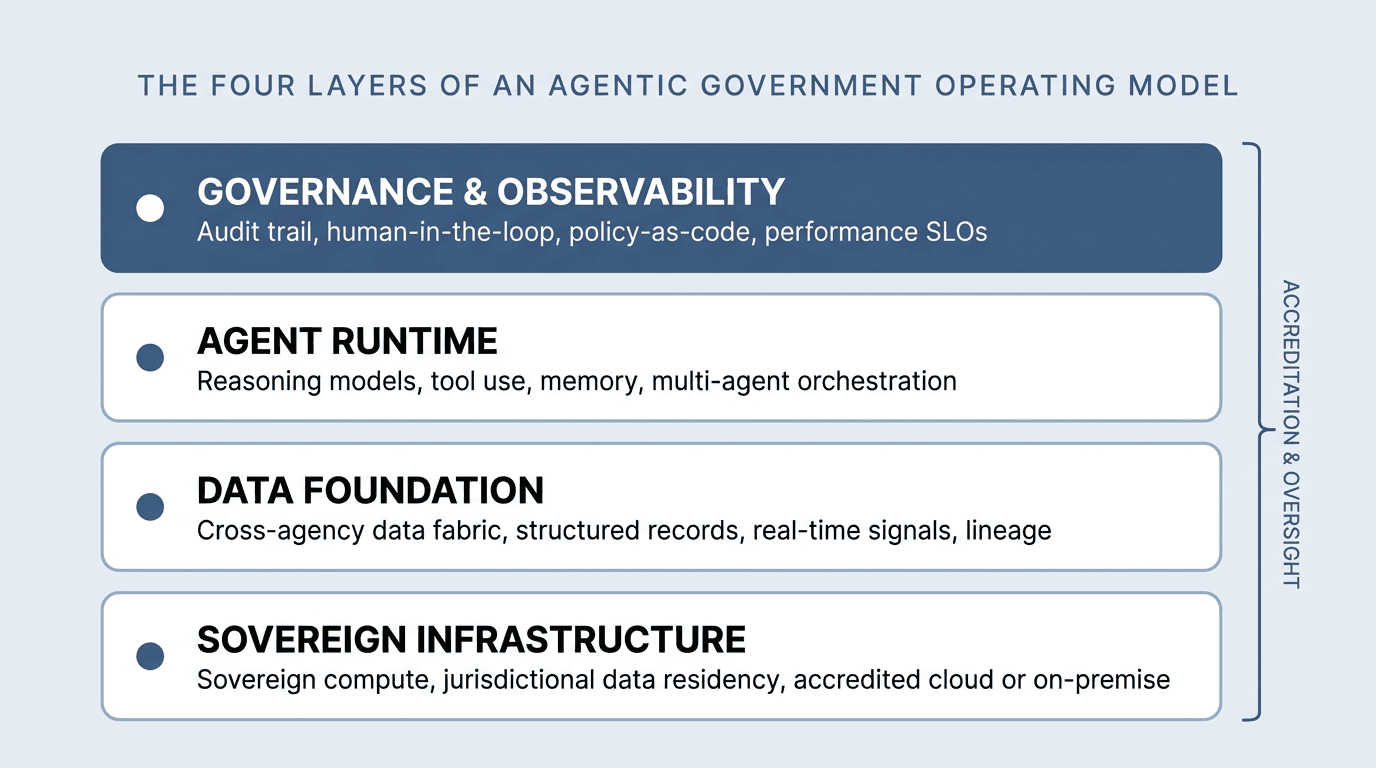

A credible agentic government runs on four stacked layers, all sitting inside a single accreditation and oversight perimeter. None of the four can be skipped without producing what most agencies recognise as the failure mode of the last decade — pilot sprawl with no production scale.

Sovereign infrastructure. Sovereign compute, accredited cloud or on-premise environments, jurisdictional data residency and controlled model weights. This is the foundation because every layer above it inherits its legal and operational guarantees.

Data foundation. A cross-agency data fabric that unifies structured records, real-time signals and unstructured content with full lineage. According to IDC FutureScape research (2025), data readiness — not algorithms — is now the primary barrier to scaling agentic AI in government. Without traceable, interoperable data, autonomy introduces operational, regulatory and trust risks rather than value.

Agent runtime. The reasoning models, tool-use orchestration, memory and multi-agent coordination that turn data into action. By 2026, the Model Context Protocol (MCP), open-sourced by Anthropic in November 2024, has become a widely adopted standard for connecting agents to tools and data sources, including government record systems, while Google's Agent2Agent (A2A) protocol, announced in April 2025, addresses communication and task hand-off between agents.
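To make the MCP reference concrete: MCP messages are JSON-RPC 2.0, and an agent invokes a server-side tool with a `tools/call` request whose result carries a list of content items. The sketch below only builds and parses those messages. It assumes the transport (stdio or HTTP) and the protocol's initialisation handshake are handled elsewhere, and the `lookup_permit` tool and the allowlist check are hypothetical. Checking the allowlist before the request is built is one way to keep the delegation perimeter in the runtime rather than in the prompt.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids must be unique per session


def tools_call_request(tool_name: str, arguments: dict, permitted: set) -> str:
    """Build an MCP `tools/call` JSON-RPC 2.0 request, refusing tools outside the allowlist."""
    if tool_name not in permitted:
        raise PermissionError(f"tool {tool_name!r} is outside the delegation perimeter")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def text_content(response_json: str) -> str:
    """Concatenate the text items of a `tools/call` result, raising on protocol or tool errors."""
    msg = json.loads(response_json)
    if "error" in msg:
        raise RuntimeError(msg["error"].get("message", "JSON-RPC error"))
    result = msg["result"]
    if result.get("isError"):
        raise RuntimeError("tool reported an error")
    return "".join(item["text"] for item in result["content"] if item.get("type") == "text")


# Usage: build a request, then parse a (hand-written) server reply.
request = tools_call_request("lookup_permit", {"permit_id": "P-2041"}, permitted={"lookup_permit"})
print(request)
reply = ('{"jsonrpc": "2.0", "id": 1, "result": {"content": '
         '[{"type": "text", "text": "P-2041: valid until 2027-03-31"}], "isError": false}}')
print(text_content(reply))
```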

Governance and observability. The audit trail, human-in-the-loop and human-on-the-loop gates, policy-as-code constraints, evaluation harness and performance service-level objectives that let oversight bodies reconstruct any agent decision after the fact. EU AI Act Article 12 record-keeping obligations, the NIST AI Risk Management Framework's guidance on documentation and traceability, and the Council of Europe Framework Convention's contestability requirements all live in this layer.

The accreditation perimeter that wraps the four layers is the formal mechanism through which the system is authorised to handle public business: an Authority to Operate in the United States, IRAP assessment in Australia, the EU AI Act conformity-assessment route in member states, and equivalent pathways elsewhere, such as departmental security assurance in the United Kingdom, where suppliers are typically procured through the G-Cloud framework.

Where agentic government creates value

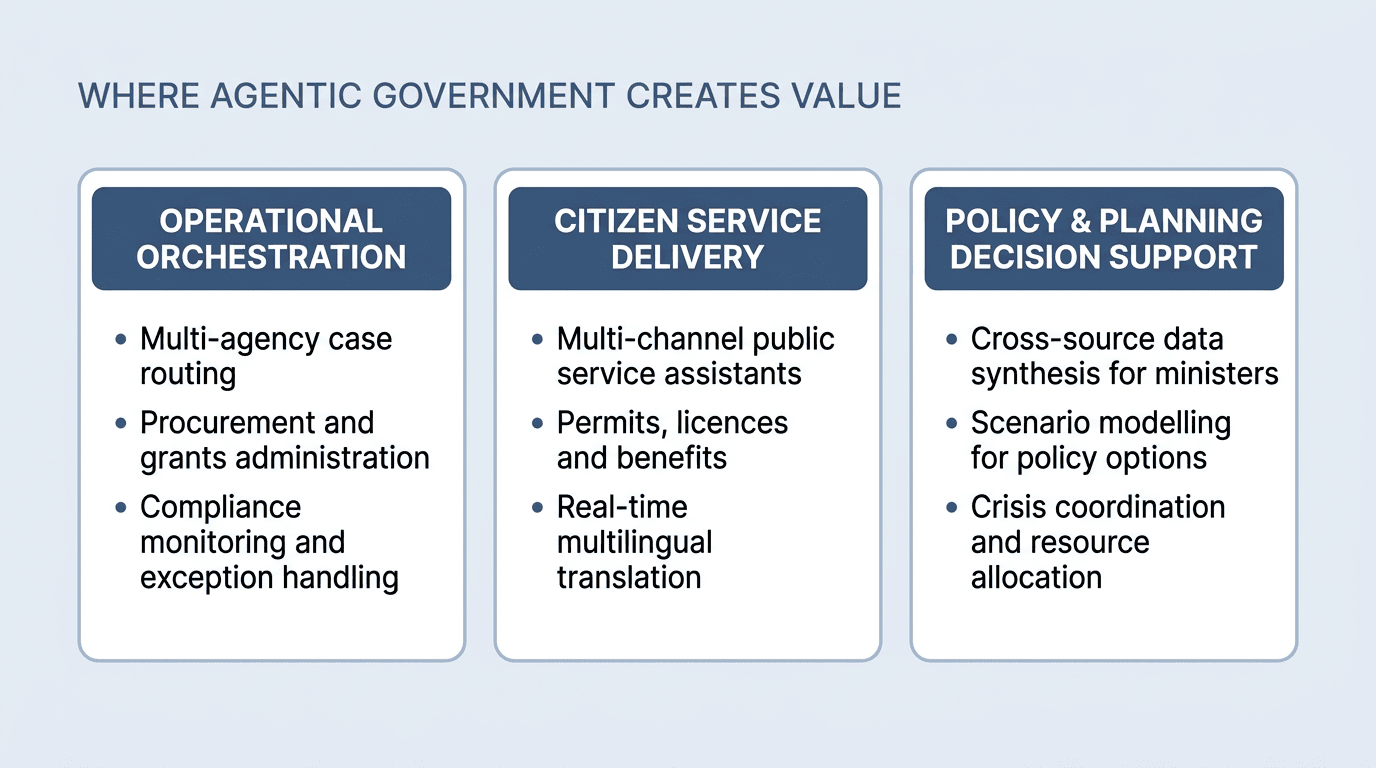

IDC's 2025 public-sector research identifies three value domains for agentic AI in government. They are useful because they map cleanly to where ministries already spend their effort — not to a list of model demonstrations.

Operational orchestration. Agent-driven systems coordinate multi-step workflows across departments — benefits processing, regulatory inspections, tax compliance, procurement, licensing and infrastructure operations. Handoffs that previously required casework or administrative adjudication are compressed into orchestrated runs, with humans entering the loop at exception points and adverse decisions.

Citizen service delivery. Multi-channel public-service assistants resolve queries, guide applications and draft responses. For routine cases, permits, licences and benefits can move from days to minutes; for complex ones, from weeks to hours. Real-time multilingual translation lowers the language barrier that has historically limited access to public services.

Policy and planning decision support. Agents synthesise data across sources, model scenarios for ministers, draft impact assessments and coordinate resource allocation in crises. They expand the analytical capacity available to decision-makers when time and complexity are constraints; they do not replace political authority over the decision itself.

In each domain, the same engineering pattern applies: agents take the structured, high-volume work; humans take the consequential decisions and the supervision of policy adherence at the population level.

Why agentic government needs sovereign infrastructure

Autonomy compounds the consequences of every infrastructure choice. An agent that drafts a benefits decision, calls a record system, pays a vendor or routes a case is doing work that, by national law, is bound to a jurisdiction. If the compute, the data path or the model weights sit outside that jurisdiction's legal control, the agent is acting under a foreign legal regime in fact, regardless of what the contract says.

This is why sovereign agentic AI is moving from a procurement preference to a procurement requirement in 2026. Several national programmes, including in the United Kingdom (AI Research Resource), France (Jean Zay), Singapore and Saudi Arabia, committed significant public compute investment between 2023 and 2025, with sovereign compute as an explicit policy objective. Data-protection laws that restrict cross-border transfers, such as the UAE Personal Data Protection Law (Federal Decree-Law No. 45 of 2021, in force January 2022) and the Saudi PDPL (in force September 2023), extend the same principle to data flows.

The technical implication is that sovereign agents — agents whose full stack runs under the deploying state's legal and operational control — are becoming the default pattern for classified, national-security and sensitive citizen-services workloads. This is one reason Net0 builds its own proprietary AI models: sovereign weight control, in-jurisdiction inference and audit-grade lineage cannot be retrofitted onto API-only foundation models.

The leadership shift: what a 24-month transformation actually requires

The most important change in agentic government is not a model release. It is the redefinition of what counts as senior performance.

In the prior digital-government era, ministers, directors-general and heads of federal entities were assessed on service uptime, digital adoption rates and cost per transaction. In the agentic era, which ambitious states are now planning around a transformation horizon of roughly 24 months, three different axes matter.

Speed of adoption. How quickly the institution moves from pilot to production: the share of services running on agents, the share of internal workflows orchestrated by them, and the cadence at which new capabilities pass accreditation.

Quality of implementation. How well the institution operates the agents in production: policy-adherence rate, escalation accuracy, contestability rate, and the parity of outcomes between AI-mediated and human-mediated service paths.
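The four quality measures are named here without formulas. As one possible operationalisation, assuming each case record carries the flags below (all field names are hypothetical), they reduce to simple rates over agent-handled cases, with parity expressed as the ratio of approval rates on the AI-mediated and human-mediated paths.

```python
from dataclasses import dataclass


@dataclass
class CaseRecord:
    path: str                # "ai" or "human": which service path handled the case
    policy_violation: bool   # did the agent breach a policy-as-code rule?
    escalated: bool          # did the agent hand the case to a human?
    should_escalate: bool    # reviewer's after-the-fact judgement
    contested: bool          # did the citizen challenge the outcome?
    approved: bool           # outcome of the case


def rate(flags: list[bool]) -> float:
    """Share of True values; 0.0 for an empty list."""
    return sum(flags) / len(flags) if flags else 0.0


def quality_metrics(records: list[CaseRecord]) -> dict[str, float]:
    ai = [r for r in records if r.path == "ai"]
    human = [r for r in records if r.path == "human"]
    human_approval = rate([r.approved for r in human])
    return {
        "policy_adherence": rate([not r.policy_violation for r in ai]),
        "escalation_accuracy": rate([r.escalated == r.should_escalate for r in ai]),
        "contestability_rate": rate([r.contested for r in ai]),
        # 1.0 means both paths approve at the same rate; NaN if the human path has no approvals.
        "outcome_parity": rate([r.approved for r in ai]) / human_approval if human_approval else float("nan"),
    }


sample = [
    CaseRecord("ai", False, False, False, False, True),
    CaseRecord("ai", True, True, True, True, False),
    CaseRecord("human", False, False, False, False, True),
    CaseRecord("human", False, False, False, False, False),
]
print(quality_metrics(sample))
# {'policy_adherence': 0.5, 'escalation_accuracy': 1.0, 'contestability_rate': 0.5, 'outcome_parity': 1.0}
```

Parity on a single outcome is only a first screen; a real programme would compare comparable case mixes rather than raw approval rates.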

Mastery of redesigning work around AI. How thoroughly leaders reorganise government work, rather than bolting agents onto existing processes. This is the axis that separates programmes that compound from programmes that stall: the institutions that compress benefits adjudication, FOI handling, licensing and procurement around what an agent can actually do — under accountable autonomy — outperform those that automate yesterday's process.

The OECD's 2024 working paper on AI skills in government estimated that fewer than 15% of civil servants in OECD member states had received structured training on AI by the end of 2024. Closing that workforce gap, not buying more models, is what determines whether a 24-month horizon is achievable.

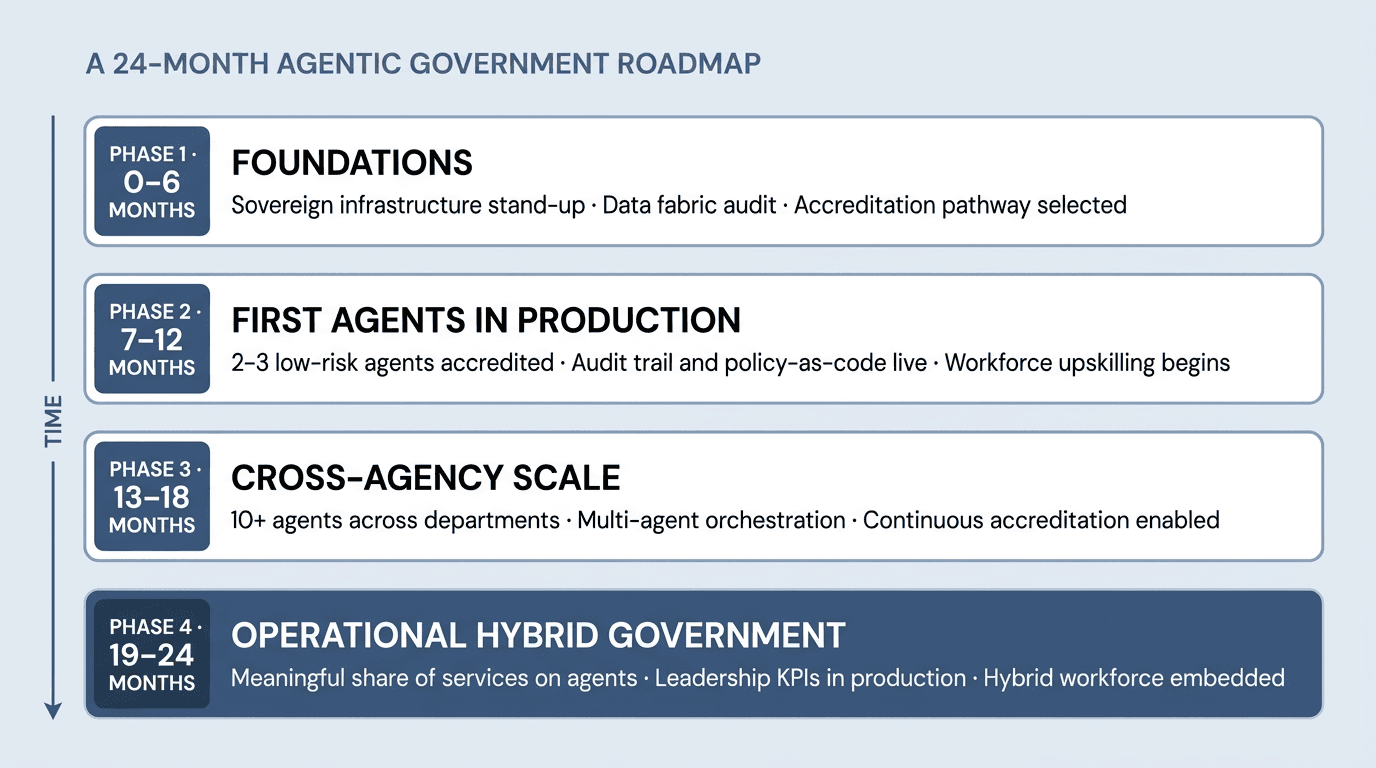

A 24-month phased roadmap

A two-year transformation is achievable when it is organised as four sequenced phases, with each phase resolving a class of constraint that would otherwise block the next.

Phase 1 (months 0–6) — Foundations. Stand up sovereign compute and the data fabric. Audit cross-agency data quality and lineage. Select the accreditation pathway and freeze the policy-as-code baseline. The output is a credible "first-agent ready" environment, not pilots.

Phase 2 (months 7–12) — First agents in production. Two or three low-risk agents (FOI drafting, document classification, internal knowledge retrieval) accredited end-to-end. Audit trail and policy-as-code live. Workforce upskilling begins across the agencies that will host the next wave.

Phase 3 (months 13–18) — Cross-agency scale. Ten or more agents across departments, with multi-agent orchestration coordinating cross-agency workflows. Continuous accreditation (cATO-style) replaces three-year reauthorisation cycles. Performance SLOs are reported alongside service KPIs.

Phase 4 (months 19–24) — Operational hybrid government. A meaningful share of services and operations runs on agents; leadership KPIs track speed, quality and redesign in production; the hybrid workforce model — ministers, directors-general and federal entities managing humans and agents — is embedded.

Guardrails: accountable autonomy, audit trails, human-in-the-loop

The opposite of agentic government is not paper government — it is unaccountable government. Every credible deployment in 2026 sits inside a guardrail stack.

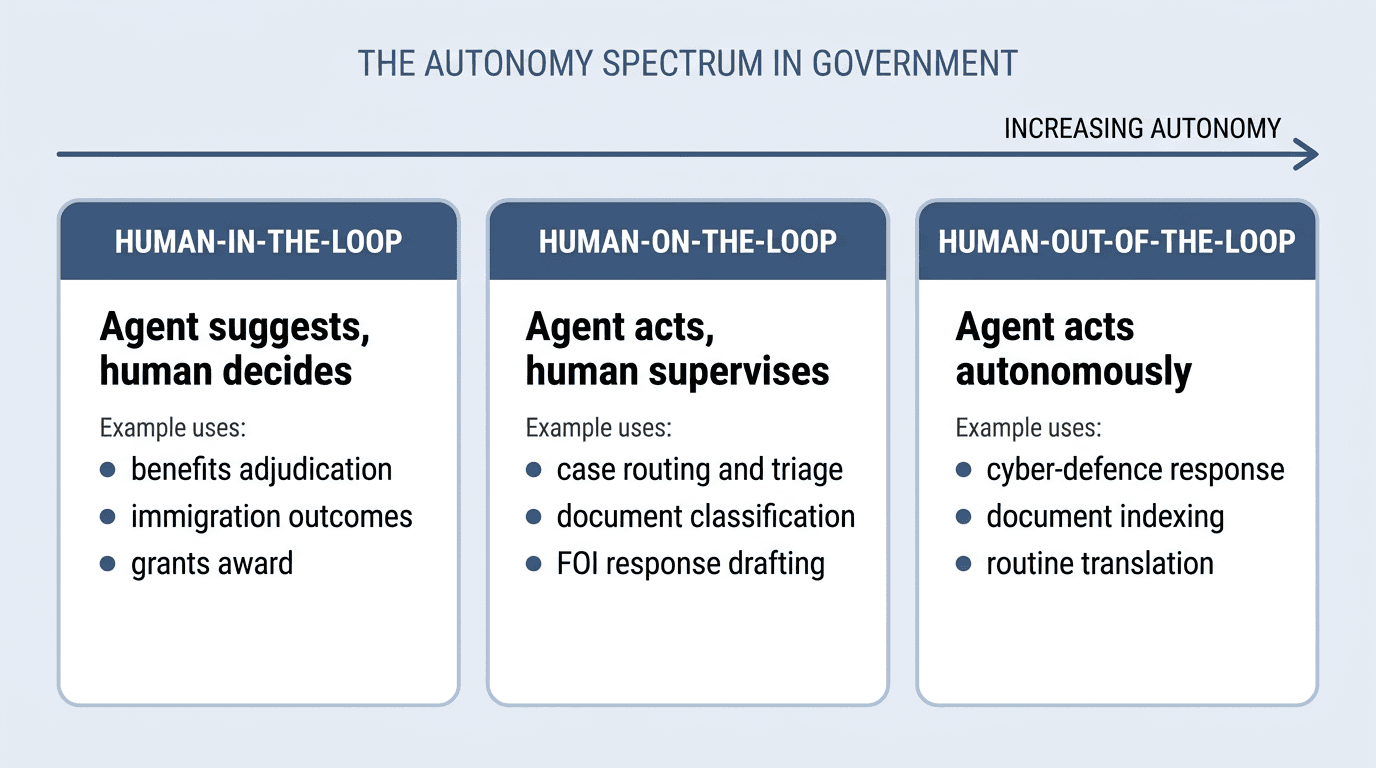

Accountable autonomy. Agents move quickly, but humans define the mission, approve exceptions and own the outcome. The pattern is captured in three reference levels — human-in-the-loop (agent suggests, human decides), human-on-the-loop (agent acts, human supervises) and human-out-of-the-loop (agent acts autonomously) — and the choice between them is a policy decision driven by the reversibility and impact of the action, not a technical preference.

In practice, decisions with significant legal weight or low reversibility — adverse benefit decisions, immigration outcomes, large grant awards — sit at human-in-the-loop. High-volume, lower-individual-impact tasks — case routing, document classification, FOI drafting — sit at human-on-the-loop, where supervision is at the population level rather than the individual record. Only narrow, fully reversible, machine-speed tasks — cyber-defence response, document indexing, routine translation — are appropriate for human-out-of-the-loop, and even then under continuous evaluation.
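The allocation rule in the two paragraphs above can itself be written as policy-as-code. A minimal sketch, assuming a three-level reversibility scale and a boolean for significant legal weight (both hypothetical encodings, not taken from any standard); the example tasks are the ones named in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    IN_THE_LOOP = "human-in-the-loop"          # agent suggests, human decides
    ON_THE_LOOP = "human-on-the-loop"          # agent acts, human supervises
    OUT_OF_THE_LOOP = "human-out-of-the-loop"  # agent acts autonomously, under continuous evaluation


@dataclass(frozen=True)
class ActionProfile:
    significant_legal_weight: bool
    reversibility: str          # "low", "partial" or "full"
    needs_machine_speed: bool


def required_oversight(profile: ActionProfile) -> Oversight:
    if profile.reversibility not in {"low", "partial", "full"}:
        raise ValueError(f"unknown reversibility: {profile.reversibility}")
    # Legal weight or low reversibility always keeps a human as the decision-maker.
    if profile.significant_legal_weight or profile.reversibility == "low":
        return Oversight.IN_THE_LOOP
    # Only fully reversible, machine-speed tasks may run without a human in the path.
    if profile.reversibility == "full" and profile.needs_machine_speed:
        return Oversight.OUT_OF_THE_LOOP
    return Oversight.ON_THE_LOOP


examples = {
    "adverse benefit decision": ActionProfile(True, "low", False),
    "case routing": ActionProfile(False, "partial", False),
    "document indexing": ActionProfile(False, "full", True),
}
for task, profile in examples.items():
    print(f"{task}: {required_oversight(profile).value}")
```

Raising on an unknown reversibility value, rather than defaulting, forces every new task type through an explicit policy decision.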

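Audit-grade evidence. Every agent action is logged (tool call, external request, write to a record, message sent) with timestamp, inputs, outputs, model version and approval events. For high-risk systems, EU AI Act Article 12 requires automatic recording of events over the lifetime of the system, and Articles 19 and 26 require providers and deployers to keep those logs for at least six months unless other applicable law sets a different period. The NIST AI RMF recommends comparable traceability, and in practice the trail is made tamper-evident so that oversight bodies can rely on it.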
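One common way to make such a trail tamper-evident is a hash chain: each entry stores the SHA-256 hash of the previous one, so altering or deleting any past entry breaks verification from that point on. A minimal sketch using only the Python standard library; the entry layout and event fields are assumptions. A production system would also anchor the latest hash somewhere the log's operator cannot rewrite (for example, a separate write-once store), since a chain on its own can be regenerated wholesale.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    body = {"ts": time.time(), "event": event, "prev_hash": log[-1]["hash"] if log else GENESIS}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    prev_hash = GENESIS
    for entry in log:
        body = {"ts": entry["ts"], "event": entry["event"], "prev_hash": entry["prev_hash"]}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, {"action": "tool_call", "tool": "case_db.read", "input": "CASE-17",
                     "output": "status=open", "model_version": "example-model-1", "approved_by": None})
append_entry(trail, {"action": "record_write", "tool": "case_db.update", "input": "CASE-17 -> closed",
                     "output": "ok", "model_version": "example-model-1", "approved_by": "officer-042"})
print(verify(trail))                              # True
trail[0]["event"]["output"] = "status=closed"     # simulate after-the-fact tampering
print(verify(trail))                              # False
```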

Contestability. Citizens must be able to challenge an AI-influenced decision and have it reviewed by a human with authority to change it. The Council of Europe Framework Convention on AI, opened for signature in September 2024, makes contestability a core obligation for public-sector systems.

Bounded delegation. Tool permissions, credential scoping, data-classification routing and circuit breakers contain the blast radius of any single failure. Indirect prompt injection, which the UK National Cyber Security Centre has highlighted as a serious risk for LLM-based systems that process untrusted content such as retrieved documents, is a concrete reason why guardrails sit between the runtime and the model rather than inside it.
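Of the bounded-delegation controls listed above, the circuit breaker is the simplest to show in code. A minimal sketch, assuming the runtime wraps each tool in its own breaker: after a set number of consecutive failures the tool is disabled for a cooldown period and calls are refused (so the agent must escalate), after which one trial call is allowed through. The class name, thresholds and injectable clock are illustrative assumptions.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when calls are blocked because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, cooldown_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0                      # consecutive failures seen so far
        self.opened_at: Optional[float] = None  # None means the breaker is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.cooldown_s:
            raise CircuitOpenError("tool disabled; escalate to a human operator")
        # Either closed, or cooldown elapsed (half-open): let this call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial call
            raise
        self.failures = 0
        self.opened_at = None                  # success closes the breaker
        return result


# Usage with a fake clock so the cooldown can be simulated.
clock_time = [0.0]
breaker = CircuitBreaker(failure_threshold=2, cooldown_s=30.0, clock=lambda: clock_time[0])


def flaky_registry_call():
    raise TimeoutError("registry unavailable")


for _ in range(2):
    try:
        breaker.call(flaky_registry_call)
    except TimeoutError:
        pass

try:
    breaker.call(lambda: "ok")
except CircuitOpenError as exc:
    print("refused:", exc)

clock_time[0] = 31.0
print(breaker.call(lambda: "ok"))   # cooldown over: trial call succeeds and closes the breaker
```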

These four controls are the working definition of "responsible agentic government" in 2026. They are also the controls that procurement teams now expect to see evidenced — under ISO/IEC 42001 AI management systems, NIST AI RMF and the OECD AI Principles, updated May 2024 — before an agent is permitted into a production environment.

How Net0 supports agentic government

Net0 builds the AI infrastructure on which agentic government runs. Its AI-first three-layer architecture — sovereign data platform, proprietary AI models and 60-plus modular applications — is designed end-to-end for institutional scale, sovereign deployment and cross-agency reuse. Programmes in the GCC and beyond use it to take ministries from pilot to accredited production inside the kind of bounded transformation horizon their leaders are now committing to.

Under the Net0 Government AI practice, customers deploy agents for citizen services, document processing, cross-agency analytics, procurement integrity, permits and licences, and infrastructure monitoring — each one accredited against the relevant national pathway and integrated with the sovereign data fabric beneath it. The same AI infrastructure powers Net0's AI-powered sustainability platform, which serves more than 400 entities across four continents — demonstrating, in production, what the agentic government operating model demands: scale, sovereignty and audit-grade evidence on a single fabric.

Net0 was founded in 2021 by Dmitry Aksenov and Sofia Fominova. Common questions about Net0's deployment model are covered in the Net0 FAQ, and additional research lives on the Net0 blog.

Book a demo to see how Net0's platform implements the four-layer agentic government operating model end-to-end.

Frequently Asked Questions

What is agentic government?

Agentic government is a public-administration operating model in which AI agents plan and execute multi-step work — drafting decisions, routing cases, updating records, coordinating across systems — under formally bounded delegation and human oversight. It is the next step beyond e-government and digital government, redesigning the work itself rather than only the channel through which it is delivered.

How does agentic government differ from e-government and digital government?

E-government moved transactions online and introduced digital identity. Digital government layered mobile-first, proactive and data-driven service delivery on top. Agentic government adds an autonomous executor: AI agents that decide, act and adapt across multiple steps inside the workflow, with humans defining the mission, approving exceptions and owning the outcome.

What is sovereign agentic AI?

Sovereign agentic AI is an agent stack (compute, data, model weights, runtime and audit layer) operating under a single jurisdiction's legal and operational control. It is increasingly the default pattern for classified, national-security and sensitive citizen-services workloads, and the architecture that data-protection laws restricting cross-border transfers (such as the UAE PDPL and Saudi PDPL) push public-sector deployments toward.

Which government functions are best suited to agentic AI?

The strongest matches are structured, high-volume workflows: benefits adjudication, document processing, permits and licences, procurement integrity, fraud and compliance monitoring, multi-channel citizen services, and cross-source synthesis for policy analysis. Decisions with significant legal weight or low reversibility — adverse benefit decisions, immigration outcomes, large procurement awards — remain human-in-the-loop by design.

How long does an agentic government transformation take?

Ambitious programmes are now planning around a roughly 24-month horizon for the first wave — moving a meaningful share of services and operations onto agents under accredited oversight. The constraint is rarely model capability; it is data architecture, accreditation throughput and workforce skills. IDC's 2025 government research found that 71% of agencies plan to increase agentic AI use in 2026–2027.

What are the main risks of agentic government?

The main risks are unaccountable autonomy, indirect prompt injection through retrieved content, automation bias in human reviewers, opaque decision logic, and supply-chain vulnerabilities in third-party models and tools. The standard mitigation stack is accountable autonomy, audit-grade evidence, contestability, bounded delegation and continuous evaluation under NIST AI RMF, EU AI Act and Council of Europe Convention obligations.